Churn Playbook - Interpretation

I want to implement the churn case outlined in this great article, but I'm curious, and a bit confused, about the thought process behind a few things.

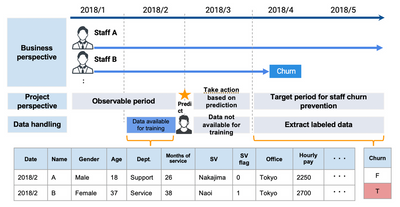

With regard to the definition of churn, they decided to use the information, whatever that may be, contained within the months of April & May to help define churn. Here is the diagram they proposed to help visualize the whole deal:

1. If the problem statement mentions "within 2 months", why the fixation on April & May? Can't we use any 2 months of churn within the total modeling period just for the purpose of defining the target? The reason I ask is, what would happen if the individual churned on 2018/3 rather than 2018/4 (assuming they stayed churned)? We'd be recording that individual's churn practically a month after it happened.

Besides, seeing that the COG is on 2018/3, if a person churns in that time, do we just completely ignore it?

2. If we continue to assume that a certain individual churned in 2018/3, then how would the outcome label for that individual (which in this case would be "Churn") differ if we were to change the definition of churn to within 3 months rather than 2 months?

It looks like it wouldn't change, right? It would still be "Churn". So what's to say that this problem is a "within 2 months" problem and not "within 3 months"? In other words, if we were to get an output likelihood, let's say of 0.78, what's stopping me from also interpreting this as a likelihood of churning within 3 months?

3. The prediction point was defined to be after February, but does that prediction date bear any importance at deployment? Or is it just for illustrative, and target-defining, purposes?

4. Assuming the decision makers will not want to wait a period equal to the observation period to amass cumulative information about each employee, they will most probably use whatever data they have on an employee, at hand, to predict how likely this person is to churn in the next two months. However, wouldn't that just produce a much less accurate result, since the model was trained on an aggregate of 2 months' worth of information for each employee?

Any clarifications are much appreciated!

Thanks for the link to the article, it was a great guide. The way I understand it, the 'observation period' data is aggregated for prediction. This would mean that if a person churned in the first month, there would not be all the required data to aggregate into features for them. With missing values, we shouldn't include them in the dataset, for the same reasons outlined by Chad when looking at FDW size.

If a person churned within the 3rd month, then there was not enough time to take preventative action. They shouldn't be included in the dataset, as you would need a minimum of three months of data preceding their churn to predict it.

The reason for the 3 month minimum (two month observation + one month COG) is that we don't want a model which has been trained to understand what churning at three months (within one month of prediction) looks like, as that information is useless: we can't act on it.

It follows that the prediction point only matters in that it marks the end of the 2 months of data used for features, and the model only predicts churn 2-3 months into the future (excluding the first month after the prediction point).
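To make that timeline concrete, here is a minimal sketch of how the label could be derived, assuming the layout discussed in this thread (features up to February, a one-month gap in March, target window April & May). The function names, the month-index encoding, and the choice to exclude gap-month churners are my own assumptions, not something taken from the article.

```python
from typing import Optional


def month_index(year: int, month: int) -> int:
    """Map a calendar month to a running integer so months can be compared."""
    return year * 12 + (month - 1)


def label_churn(
    churn_month: Optional[int],
    last_observed_month: int,
    gap_months: int = 1,
    target_months: int = 2,
) -> Optional[int]:
    """Return 1 (churn in target window), 0 (no churn in it), or None (exclude row)."""
    if churn_month is None:
        return 0  # never churned
    target_start = last_observed_month + gap_months + 1
    target_end = target_start + target_months - 1
    if churn_month < target_start:
        # Churned during the observation period or the gap: excluded here.
        # This is an assumption following the interpretation in this thread.
        return None
    return 1 if churn_month <= target_end else 0


feb = month_index(2018, 2)
print(label_churn(month_index(2018, 3), feb))                    # None (gap month)
print(label_churn(month_index(2018, 4), feb))                    # 1
print(label_churn(month_index(2018, 6), feb))                    # 0
print(label_churn(month_index(2018, 6), feb, target_months=3))   # 1
```

The last two calls show where a "within 2 months" target and a "within 3 months" target actually differ: the label only changes for people who churn in the extra month, so the two definitions give different training sets even though many individual rows look the same.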

Decision makers may not want to wait 2 months before having anything to act on. This model, however, shouldn't be used for such predictions, so perhaps another model could be used, trained on a smaller observation window?
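As a rough illustration of why the observation window matters, here is a small sketch of window-based aggregation. The record layout (month index, value) and the feature names are hypothetical, made up only for this example.

```python
from typing import Dict, Iterable, Tuple


def aggregate_features(
    records: Iterable[Tuple[int, float]],
    last_observed_month: int,
    observation_months: int = 2,
) -> Dict[str, float]:
    """Aggregate (month_index, value) records that fall inside the observation window."""
    window_start = last_observed_month - observation_months + 1
    in_window = [(m, v) for m, v in records if window_start <= m <= last_observed_month]
    total = sum(v for _, v in in_window)
    months_with_data = len({m for m, _ in in_window})
    return {
        "total": total,
        "mean_per_month": total / months_with_data if months_with_data else 0.0,
        "months_with_data": float(months_with_data),
    }


# Hypothetical activity counts for one person; months are plain running integers.
records = [(1, 10.0), (2, 6.0)]
full = aggregate_features(records, last_observed_month=2, observation_months=2)
short = aggregate_features(records, last_observed_month=2, observation_months=1)
print(full)   # total 16.0 over two months
print(short)  # total 6.0 over one month
```

A model trained on two-month totals would see systematically smaller totals if it were scored on only one month of data, which is one reason a separate model with a shorter window may be the safer option. Per-month rates plus an explicit `months_with_data` feature may be less sensitive to this, but that is an assumption that would need checking on real data.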

It's a great point you highlight. I didn't consider, when reading the article, that you would need to discard rows with churn in the third month.

Well, the way I see it, I don't think the 3 month window was meant to be some hard limit on the amount of data each person should have to "be included" in the churn use case. I think it was just a period of time we decided to focus on, to see who has data, and how much, up until they churn.

So, referencing that same person who churns in the first month, I would say any data that person has, up until the month they churned, will be used regardless of whether it covers 3 months or not. The 3 months here was, supposedly, just an observation period.

As for the size of the FDW, after talking to a few people about this use case, it seems that they do follow the idea of using aggregate data for prediction. However, in deployment, they do not wait the full time taken during training for the aggregation of data. Instead they use whatever they have at hand.

So that got me thinking whether the model is purely trying to understand those interactions between the features and the target or instead is trying to understand the interactions between the features and the target in the context of aggregated data? I presume the latter.

There are just many edge cases that I feel go over my head regarding this use case.

Exactly, if we do end up dropping all the rows in COG, then according to that logic someone who has churned in the third month but came back in the fourth month has technically not churned, which I think should be a problem?

Edit: I think the reason they might not care about churn in the third month is that they wouldn't have enough time to "save" that customer anyway, as you pointed out.
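Here is a minimal sketch of that returning-customer edge case, assuming we have an active/inactive flag per month. The function name, the `count_gap_churn` switch, and treating months without a record as active are all my own assumptions for illustration.

```python
from typing import Dict


def label_from_status(
    active_by_month: Dict[int, bool],
    last_observed_month: int,
    gap_months: int = 1,
    target_months: int = 2,
    count_gap_churn: bool = False,
) -> int:
    """Label 1 if the person is inactive in any target month, else 0.

    Months with no record are treated as active (assumption).
    """
    target_start = last_observed_month + gap_months + 1
    gap = range(last_observed_month + 1, target_start)
    target = range(target_start, target_start + target_months)
    left_in_gap = any(not active_by_month.get(m, True) for m in gap)
    left_in_target = any(not active_by_month.get(m, True) for m in target)
    if left_in_target:
        return 1
    # Optionally count a departure during the gap as churn as well.
    return 1 if (count_gap_churn and left_in_gap) else 0


# Months 1..5 stand for January..May; the person leaves in March and returns in April.
status = {1: True, 2: True, 3: False, 4: True, 5: True}
print(label_from_status(status, last_observed_month=2))                        # 0
print(label_from_status(status, last_observed_month=2, count_gap_churn=True))  # 1
```

With the gap ignored, this person ends up labelled as "no churn", which is exactly the case raised above. Whether that is acceptable depends on whether a short absence during the gap should count as churn for the business.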

Yeah I suppose, it depends how you see it. I would have thought adding them as a new entry when they return would be fine; in the context of employees leaving, this wouldn't be a hugely common event. Also, I'm not sure if the gap is called COG (maybe it is?), as this problem is not a time series.