Cross Validation Modeling in DR

According to the video that accompanies this documentation (https://www.datarobot.com/wiki/cross-validation/), when DataRobot performs 5-fold cross-validation, 5 models are made.

Which of the 5 models becomes the "final" model? Is it the model that has the best performance on the validation set?

Then, in the Evaluate > ROC tab, how are the counts in the confusion matrix assigned for the Cross Validation set? Are they based on all 5 of the models generated during the training process? If so, how is that representative of how the "final" model really performs?

Hey @bioinformed,

In the ROC tab, the confusion matrix shows all the predictions from cross validation. With 5-fold cross validation there should be roughly five times as many predictions in total as with TVH (training/validation/holdout), where only a single validation fold is scored. Why CV provides a more representative score is explained in the DataRobot Docs. Essentially, CV lets you see how the model trains and validates on all of your data, not just whatever data ends up in those sets initially. This benefit is most pronounced in small datasets, where a single validation set is more likely to be imbalanced or unrepresentative of the environment.
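To make the prediction counts concrete, here is a minimal pure-Python sketch of how pooled out-of-fold predictions could feed one confusion matrix. It is an illustration only, not DataRobot's implementation: the fold assignment (`i % k`), the toy one-feature "model" (`fit_threshold`), and the data are all assumptions made up for the example.

```python
from collections import Counter

def kfold_indices(n_rows, k):
    """Assign row i to fold i % k (a stand-in for random fold assignment)."""
    return [i % k for i in range(n_rows)]

def fit_threshold(xs, ys):
    """Toy 'model': cut at the midpoint between the two class means."""
    pos = [x for x, y in zip(xs, ys) if y == 1]
    neg = [x for x, y in zip(xs, ys) if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2

def pooled_cv_confusion(xs, ys, k=5):
    """Train k models; each one predicts only the rows of its held-out fold."""
    folds = kfold_indices(len(xs), k)
    counts = Counter()  # keys are (actual, predicted)
    for fold in range(k):
        train = [i for i in range(len(xs)) if folds[i] != fold]
        valid = [i for i in range(len(xs)) if folds[i] == fold]
        cut = fit_threshold([xs[i] for i in train], [ys[i] for i in train])
        for i in valid:
            pred = 1 if xs[i] > cut else 0
            counts[(ys[i], pred)] += 1
    return counts

# Hypothetical data: one feature, binary target, 20 rows.
xs = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0] * 2
ys = [0, 0, 0, 0, 1, 0, 1, 1, 1, 1] * 2

cm = pooled_cv_confusion(xs, ys, k=5)
rows_in_one_fold = len(xs) // 5

print("predictions in pooled CV matrix:", sum(cm.values()))
print("predictions from one validation fold:", rows_in_one_fold)
```

Every row is scored exactly once, by the model that did not see it during training, so the pooled matrix holds as many predictions as there are rows in the CV partition, which is five times the size of any single fold.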

I don't know which model ends up being used, although DataRobot typically recommends that, once you have chosen a model, you retrain it on 100% of the data before deployment to create the final model.

Thanks, @IraWatt. I do understand the benefits of using CV. I'm just trying to figure out the details around DataRobot's implementation.

According to the link I posted in the original question, 5 models are created during 5-fold CV. I'm trying to figure out how DataRobot chooses which becomes the "final" model of the 5, and how the confusion matrix counts are determined with 5-fold CV.

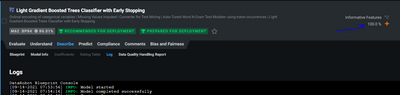

Let's say we run Autopilot mode in DataRobot. When the process is done and you go to the Leaderboard, you'll see that all of your models have been trained and each has a validation score. These models, the ones that have been scored on the validation dataset, are the "final" models you are asking about.

When you run cross validation, DataRobot trains four more models, using different folds of the data for validation. However, that first model that was trained is the "final" model.

Regarding the confusion matrix...

> Is this based on all 5 of the models generated during the training process?

Yes.

> If so, how is that representative of how the "final" model really performs?

Let me know if I am interpreting your hesitation correctly: If we have one model trained on one particular split of the data, and the confusion matrix comes from five models all trained on different portions of the data, how does that really represent the one "final" model we end up choosing?

Is that an accurate restatement of your concerns?

In DataRobot, a model is a blueprint that has been applied to and trained on some data. We train one model, and then four more if we run cross validation. But in the end, we need to choose one model to ultimately put into production.

Cross validation is a great way to analyze how this particular blueprint we've trained will generalize to new data. You said you're already aware of the benefits of CV, so I won't restate them. But perhaps you'd want to tune your classification threshold based on the CV confusion matrix since that eliminates the selection bias from any one particular validation set.
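As a rough sketch of that threshold-tuning idea, the snippet below picks a classification threshold from pooled out-of-fold probabilities. The probabilities and labels are hypothetical, and using F1 as the selection criterion is an assumption for illustration; the thread does not say which metric to optimize, and this is not how the DataRobot UI computes it.

```python
def f1_at(threshold, probs, labels):
    """F1 score when rows with prob >= threshold are predicted positive."""
    tp = sum(1 for p, y in zip(probs, labels) if p >= threshold and y == 1)
    fp = sum(1 for p, y in zip(probs, labels) if p >= threshold and y == 0)
    fn = sum(1 for p, y in zip(probs, labels) if p < threshold and y == 1)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0

def best_threshold(probs, labels):
    """Try each observed probability as a cut and keep the best F1."""
    candidates = sorted(set(probs))
    return max(candidates, key=lambda t: f1_at(t, probs, labels))

# Hypothetical out-of-fold probabilities pooled across all CV folds.
oof_probs = [0.05, 0.20, 0.35, 0.40, 0.55, 0.60, 0.70, 0.80, 0.90, 0.95]
oof_labels = [0, 0, 0, 1, 0, 1, 1, 1, 1, 1]

threshold = best_threshold(oof_probs, oof_labels)
print("chosen threshold:", threshold)
print("F1 at that threshold:", round(f1_at(threshold, oof_probs, oof_labels), 3))
```

Because the candidates are scored against predictions from every fold, the chosen threshold does not depend on which rows happened to land in one particular validation set.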

But in the end, we need just one model. At DataRobot we use the one that was trained first. Since all of the folds in k-fold cross validation were randomly assigned, there's no reason to really prefer one of the five models trained in cross validation over any of the others.

We do not choose the model with the lowest CV fold score, as you wondered about in your original question. If we did that, we would potentially be overfitting to that fold.
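A small simulation can illustrate why picking the lowest-scoring fold is risky. It assumes, purely for illustration, that all five fold models share the same true error and differ only by random noise (the numbers `true_error` and `noise` are invented); it says nothing about DataRobot's internals.

```python
import random

def simulate(n_trials=2000, k=5, true_error=0.30, noise=0.05, seed=0):
    """Compare the score of the first fold model with the lowest of k scores."""
    rng = random.Random(seed)
    first_total = 0.0
    best_total = 0.0
    for _ in range(n_trials):
        # Each fold score is the same true error plus independent noise.
        scores = [true_error + rng.gauss(0, noise) for _ in range(k)]
        first_total += scores[0]     # always keep the first model
        best_total += min(scores)    # cherry-pick the lowest fold score
    return first_total / n_trials, best_total / n_trials

first_mean, best_mean = simulate()
print("average score of the first model:", round(first_mean, 3))
print("average score of the cherry-picked model:", round(best_mean, 3))
```

Under these assumptions the first model's score averages out to the true error, while the cherry-picked score is systematically lower even though no model is actually better. That optimistic gap is the selection effect described above.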

Let me know if you are still wondering about anything else.

@bioinformed Those are good questions and my colleagues have provided excellent answers above. However, it may be easier for you to clarify your understanding of the platform if you attended one of the DataRobot University Foundation courses which I list below:

https://university.datarobot.com/automl-i

https://university.datarobot.com/datarobot-for-data-scientists

The instructors would also be happy to address any further queries you may have as you get to understand the platform better through the courses.

Hi @bioinformed - Happy to see your question received so many responses from so many members. Are you still looking for help in understanding how cross-validation works within DataRobot? Please let us know! And if one of these responses provided the info you needed, please accept the solution. Thanks for sharing your questions with your peers!