Feature Selection and Hyperparameter Tuning in Time Series

I'm confused about how feature selection and hyperparameter tuning work in a supervised setting on the DataRobot platform. As a data scientist, I've recently run into issues when splitting a dataset into train, validation, and test sets and using those splits for both feature selection and hyperparameter tuning. If I use my validation set to evaluate my feature selection methods and pick the best features, can I then use the same validation set to tune my hyperparameters? To explain further: after I pick my best features, I fit a model using those features on the training set and then tune its hyperparameters against the validation set. The problem is that I have already tuned my features on the validation set, so when I tune my hyperparameters on that same set, I have technically already seen the validation set and optimized to it. I would then use the test set to see how my model performs on out-of-sample data. How does DataRobot work around this problem, and what are strategies for running both feature selection and hyperparameter tuning in an ML pipeline?

Accepted Solutions

@fedeesku Hey, sorry for the delay in responding to this question.

There are a few questions about data partitioning embedded within your question:

1. General partitioning strategy for AutoML vs AutoTS problems.

2. Hyperparameter tuning on different data partitions in AutoML

3. How does DataRobot do this for Time Series problems?

There's also a question on feature selection, which actually involves a few steps. If you have questions on feature selection, please share some additional details so I can write a targeted reply; there is a lot going on with features in Time Series Modeling.

As I'm not sure which of those questions you're most interested in, I'll give a brief answer to each one; please follow up on whichever you'd like to know more about.

1. Partitioning strategies

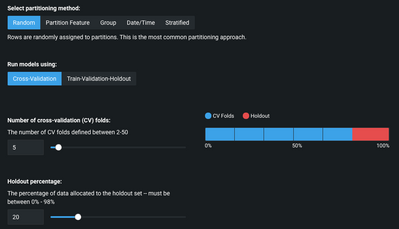

In AutoML problems (in which your data is not ordered in time within one or more series), you can use several partitioning strategies. By default, we use Cross-Validation + Holdout, with rows randomly distributed into each partition. The holdout data is physically locked away from the modeling stages and only becomes available once you confirm, with a button click, that you want to unlock it. We also support Train-Validation-Holdout, as you described.
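To make the default AutoML scheme concrete, here is a minimal sketch of randomly assigning rows to a holdout set plus k cross-validation folds. The function name `cv_holdout_partition` and the defaults (5 folds, 20% holdout) are illustrative assumptions, not DataRobot's internal code or settings.

```python
import random

def cv_holdout_partition(n_rows, n_folds=5, holdout_frac=0.2, seed=0):
    """Randomly assign each row index to the holdout or to one of n_folds CV folds.

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)
    indices = list(range(n_rows))
    rng.shuffle(indices)
    n_holdout = int(round(n_rows * holdout_frac))
    # The first n_holdout shuffled rows are locked away as the holdout
    holdout = sorted(indices[:n_holdout])
    remaining = indices[n_holdout:]
    # Deal the remaining rows round-robin so fold sizes differ by at most one
    folds = [sorted(remaining[k::n_folds]) for k in range(n_folds)]
    return holdout, folds

holdout, folds = cv_holdout_partition(100, n_folds=5, holdout_frac=0.2)
print(len(holdout), [len(f) for f in folds])
```

Every row ends up in exactly one partition; the holdout indices would be set aside and left untouched until the final check.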

All of these can be controlled (number of CV folds, number of rows in each partition, etc.). You can also select a different partitioning strategy: a partition feature in your training dataset, group partitioning, stratified partitioning, or date/time partitioning. Each works somewhat differently, but with every option you can still control the size and number of partitions.
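Group partitioning can be sketched as follows: all rows that share a group value (for example, the same customer ID) are kept in the same fold, so a model is never validated on a group it has already seen in training. The function `group_folds` and its greedy balancing rule are hypothetical, shown only to illustrate the idea.

```python
from collections import defaultdict

def group_folds(groups, n_folds=3):
    """Assign row indices to folds so every row of a group lands in the same fold.

    Hypothetical helper for illustration only.
    """
    rows_by_group = defaultdict(list)
    for i, g in enumerate(groups):
        rows_by_group[g].append(i)
    folds = [[] for _ in range(n_folds)]
    # Greedy balancing: place the largest remaining group into the currently smallest fold
    for g in sorted(rows_by_group, key=lambda g: -len(rows_by_group[g])):
        smallest = min(range(n_folds), key=lambda k: len(folds[k]))
        folds[smallest].extend(rows_by_group[g])
    return [sorted(f) for f in folds]

groups = ["a", "a", "b", "b", "b", "c", "d", "d"]
print(group_folds(groups, n_folds=3))
```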

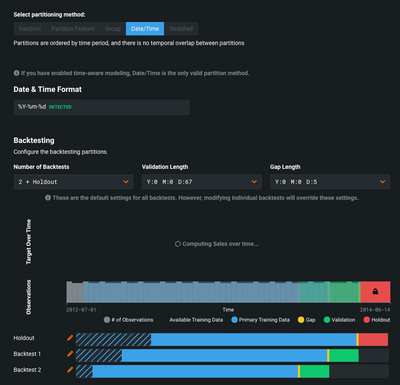

This brings us to data with a time component, which occurs in both 'Out-of-Time Validation' (OTV) and 'Time Series Modeling'. In both cases, you need to ensure that earlier data is used to train models and later data is used to validate them. This requires a date/time partitioning approach, which we call 'backtesting'.
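The core rule of date/time partitioning, that training rows must come before validation rows, fits in a few lines. This sketch assumes rows are `(date, value)` tuples and uses a hypothetical single cutoff date; it is not DataRobot's implementation.

```python
from datetime import date

def out_of_time_split(rows, cutoff):
    """Split (date, value) rows so all training data strictly precedes validation data."""
    train = [r for r in rows if r[0] < cutoff]
    valid = [r for r in rows if r[0] >= cutoff]
    return train, valid

rows = [(date(2021, 1, d), d * 1.5) for d in range(1, 11)]
train, valid = out_of_time_split(rows, cutoff=date(2021, 1, 8))
# Sanity check: no "future" rows leak into the training data
assert max(r[0] for r in train) < min(r[0] for r in valid)
print(len(train), len(valid))
```

A random shuffle, as in the AutoML default, would break this ordering guarantee, which is why time-aware problems need their own partitioning scheme.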

You can configure the number and duration of backtests (both the validation period and the training period), the size (if any) of a gap between training and validation data, and whether to use a 'holdout' backtest partition (similar to the AutoML holdout, except that it uses the most recent data). This ensures that you get accurate performance estimates for your models and don't accidentally introduce target leakage by having fragments of 'future' data in the training data.
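The backtest settings above can be illustrated with a small window generator over integer time steps. This is a sketch under stated assumptions: backtest #1 is the most recent, each further backtest steps back by one validation period, the holdout is the final `holdout_len` steps, and no gap is modeled before the holdout. The function and parameter names are hypothetical, not DataRobot API parameters.

```python
def backtest_windows(n_periods, n_backtests, validation_len, gap_len=0,
                     holdout_len=0, training_len=None):
    """Return ([(train_range, validation_range), ...], holdout_range).

    Ranges are half-open [start, end) index pairs. Backtest 1 is the most
    recent; later backtests step backward in time. Hypothetical helper.
    """
    holdout = (n_periods - holdout_len, n_periods) if holdout_len else None
    windows = []
    val_end = n_periods - holdout_len
    for _ in range(n_backtests):
        val_start = val_end - validation_len
        train_end = val_start - gap_len  # the gap separates training from validation
        # training_len=None means "use all available earlier history"
        train_start = 0 if training_len is None else max(0, train_end - training_len)
        if train_end <= train_start:
            raise ValueError("not enough history for the requested backtests")
        windows.append(((train_start, train_end), (val_start, val_end)))
        val_end = val_start  # step backward by one validation period
    return windows, holdout

windows, holdout = backtest_windows(n_periods=100, n_backtests=3,
                                    validation_len=10, gap_len=2, holdout_len=10)
for i, (train, valid) in enumerate(windows, start=1):
    print(f"backtest {i}: train={train} validation={valid}")
print("holdout:", holdout)
```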

2. Hyperparameter tuning on different data partitions in AutoML.

Before we break down how hyperparameters are tuned during cross-validation, I want to summarize what CV is supposed to be used for. Cross-validation is designed to help you decide which modeling approach (we use the term 'blueprint') will generalize best. The CV score is an average measure of the entire modeling process, including the hyperparameter tuning. It is not used to select the best set of hyperparameters; it is used to select a model. Hyperparameter tuning is allowed to occur within each CV fold, and it is done on out-of-sample data within that fold's training data. The hyperparameters used in the final model are determined by optimizing performance on the validation data after fitting a model; this validation partition is equivalent to CV fold #1. If you want to use the full dataset for parameter tuning, the best practice is to retrain your model on 100% of the data.
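Tuning inside each fold while scoring on that fold's untouched validation data is often called nested cross-validation, and it speaks directly to the concern about reusing a validation set. Below is a generic, stdlib-only sketch using a toy 1-D k-nearest-neighbours regressor whose hyperparameter is `k`; the model, candidate values, and helper names are illustrative assumptions, not how DataRobot tunes blueprints internally.

```python
import random
import statistics

def knn_predict(train, x, k):
    """Predict y at x as the mean y of the k nearest training points (1-D feature)."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return statistics.fmean(y for _, y in nearest)

def mse(train, test, k):
    """Mean squared error of the k-NN model fit on `train`, scored on `test`."""
    return statistics.fmean((knn_predict(train, x, k) - y) ** 2 for x, y in test)

def tune_k(train, candidates, inner_frac=0.25, seed=0):
    """Pick k using an inner split carved out of the training data only."""
    rows = train[:]
    random.Random(seed).shuffle(rows)
    n_inner = max(1, int(len(rows) * inner_frac))
    inner_valid, inner_train = rows[:n_inner], rows[n_inner:]
    return min(candidates, key=lambda k: mse(inner_train, inner_valid, k))

def cv_score_with_tuning(data, n_folds=4, candidates=(1, 3, 5, 9)):
    """Outer CV: tune inside each training fold, then score on the untouched outer fold."""
    folds = [data[i::n_folds] for i in range(n_folds)]
    scores, chosen = [], []
    for i, valid in enumerate(folds):
        train = [row for j, f in enumerate(folds) if j != i for row in f]
        k = tune_k(train, candidates)  # the outer fold `valid` is never seen here
        chosen.append(k)
        scores.append(mse(train, valid, k))
    return statistics.fmean(scores), chosen

rng = random.Random(42)
data = [(x, 2 * x + rng.gauss(0, 1)) for x in (rng.uniform(0, 10) for _ in range(80))]
score, chosen_ks = cv_score_with_tuning(data)
print(f"mean CV MSE: {score:.3f}, k chosen per fold: {chosen_ks}")
```

The outer score estimates the whole "fit + tune" procedure, which is why it suits comparing modeling approaches rather than fixing one `k`. The same pattern can, in principle, also wrap feature selection by running it only on the inner split.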

3. How does DataRobot do this with Time Series Modeling or OTV?

In date/time partitioning ('backtesting'), we also perform hyperparameter tuning within the training data of the most recent backtest (what we call 'backtest #1'), which consists of the most recent training and validation data, excluding the holdout data (if a holdout is used).

The hyperparameters found there are then reused when stepping backward across the other backtests (training and validation); notably, we do not repeat hyperparameter tuning for each backtest. Importantly, the same hyperparameters are also used when stepping 'forward in time' to the holdout data. This is how we check both that these hyperparameters suit historical data (based on performance across all backtest validation periods) and that they will continue to work in the future on the holdout data (once holdout is unlocked).
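Tuning once on backtest #1 and then reusing the result can be sketched with a toy moving-average forecaster whose hyperparameter is the window length. The series, window candidates, backtest layout (three backtests, no gap), and helper names are all hypothetical; the point is only that the chosen window is fixed before it is scored on the other backtests and on the holdout.

```python
import random
import statistics

def moving_average_mae(series, start, end, window):
    """Mean absolute one-step-ahead error over series[start:end].

    Each point t is forecast from the `window` values immediately before it,
    so only past observations are used.
    """
    errors = [abs(statistics.fmean(series[t - window:t]) - series[t])
              for t in range(start, end)]
    return statistics.fmean(errors)

def tune_window(series, train_range, candidates, inner_len):
    """Choose the window on the last `inner_len` points of backtest 1's training range."""
    train_start, train_end = train_range
    inner_start = train_end - inner_len
    # Skip candidates that would need history from before the training range starts
    feasible = [w for w in candidates if inner_start - w >= train_start]
    return min(feasible, key=lambda w: moving_average_mae(series, inner_start, train_end, w))

rng = random.Random(7)
series = [0.1 * t + rng.gauss(0, 1) for t in range(100)]

# Illustrative (train, validation) ranges, backtest 1 (most recent) first, plus a holdout
backtests = [((0, 80), (80, 90)), ((0, 70), (70, 80)), ((0, 60), (60, 70))]
holdout = (90, 100)

best_w = tune_window(series, backtests[0][0], candidates=(2, 5, 10, 20), inner_len=10)
# The same window is reused, without re-tuning, on every backtest and on the holdout
backtest_scores = [moving_average_mae(series, *valid, best_w) for _, valid in backtests]
holdout_score = moving_average_mae(series, *holdout, best_w)
print("window:", best_w, "backtest MAEs:", [round(s, 3) for s in backtest_scores],
      "holdout MAE:", round(holdout_score, 3))
```

In this layout, backtest #2's validation window (steps 70 to 80) is also the inner tuning window inside backtest #1's training range, so only the holdout score is fully independent of the tuning step.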

Does that clear things up? If not, what follow-up questions do you have?