Lab: Evaluate a Regression Model

I'm working through the lab "Evaluate a Regression Model" and am in the section "Model Building and Evaluation". I understand the first part of the exercise (running the model, finding the target leakage, etc.).

However, when I get to "Model Evaluation and Interpretation" I start getting the wrong results (e.g., when I build the app, its results are completely wrong). I think I'm not adapting the model setup correctly. Just to check: what changes are we expected to make compared to the baseline run? Do we simply remove the one feature with target leakage and rerun?

Thanks!

Hi @Inactive,

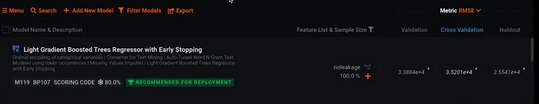

I had a look at the lab. The key step before modelling is to create a new feature list called 'noleakage' from the 'informative features' list, leaving out the 'convertedComp' feature, as you said. Could you share a screenshot of your Leaderboard so we can check whether it lines up with the tutorial?
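For reference, the feature-list step can be sketched in plain Python. This is only an illustration of the idea, not the lab's own procedure (which is done in the UI): the helper name `make_noleakage_list` and every column name except 'convertedComp' are hypothetical.

```python
def make_noleakage_list(informative_features, leaky_features=("convertedComp",)):
    """Return a copy of the feature list with the leaky columns left out."""
    leaky = set(leaky_features)
    return [name for name in informative_features if name not in leaky]


# Hypothetical column names for illustration; only 'convertedComp' comes from the thread.
informative = ["yearsCoding", "country", "convertedComp", "education"]
noleakage = make_noleakage_list(informative)
print(noleakage)
```

The models are then rerun on the reduced list rather than on the original one.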

All the best,

Ira

Many thanks, this helps.

Hi Martina,

On the latter part of your question: the lab includes exercises such as what-if analyses, where you run the model with different input values and check whether the outputs are what you would expect across your range of inputs.
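A what-if analysis of this kind can be sketched as follows. It is a minimal illustration only: `toy_predict` is a made-up stand-in for a deployed model, and the feature names and values are hypothetical.

```python
def what_if(predict, base_row, feature, values):
    """Score copies of base_row that differ only in the chosen feature."""
    results = []
    for value in values:
        row = dict(base_row)  # copy so the baseline row is not modified
        row[feature] = value
        results.append((value, predict(row)))
    return results


def toy_predict(row):
    # Hypothetical linear rule standing in for the real model.
    return 30000 + 2500 * row["yearsCoding"]


base = {"yearsCoding": 5, "country": "DE"}
sweep = what_if(toy_predict, base, "yearsCoding", [0, 5, 10, 20])
for value, prediction in sweep:
    print(value, prediction)
```

If the predictions move in an implausible direction or jump wildly as a single input changes, that is a hint that the model setup needs another look.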

In real life, a further exercise would be to evaluate model quality (after you've removed the target leakage) using Lift Charts (and, for classification projects, ROC curves) against whatever metric threshold the SME deems appropriate.
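As a rough sketch of what a lift chart summarizes: rows are sorted by predicted value, split into equal-sized bins, and the mean prediction is compared with the mean actual value in each bin. This is one common construction, not necessarily how the platform computes its chart, and the numbers below are made up.

```python
def lift_chart_bins(actual, predicted, n_bins=10):
    """Return (mean_predicted, mean_actual) per bin, sorted by prediction."""
    pairs = sorted(zip(predicted, actual))
    n = len(pairs)
    bins = []
    for b in range(n_bins):
        chunk = pairs[b * n // n_bins:(b + 1) * n // n_bins]
        if not chunk:
            continue  # skip empty bins when there are fewer rows than bins
        mean_pred = sum(p for p, _ in chunk) / len(chunk)
        mean_act = sum(a for _, a in chunk) / len(chunk)
        bins.append((mean_pred, mean_act))
    return bins


# Made-up values for illustration only.
predicted = [10, 20, 30, 40, 50, 60]
actual = [12, 18, 33, 37, 55, 58]
bins = lift_chart_bins(actual, predicted, n_bins=3)
for mean_pred, mean_act in bins:
    print(mean_pred, mean_act)
```

Bins where the two means diverge show the ranges of predictions in which the model over- or under-estimates.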

You could also try evaluating the model's interpretations.