
lift chart



Can someone explain how the lift chart works?


Accepted Solutions


Hi @krista-kelly,

Sure! Let's say you're predicting the risk of loan default, i.e. your target variable is whether the loan defaulted (Yes or No). In that use case, you're typically most interested in the loans that default, i.e. the riskiest loans. So what you want to see is how accurate your model is for the riskiest X% of loans, not just how accurate it is overall.

A lift chart shows you how accurate the model is across the range of predicted risk, from the least to the most risky loans. By default, the right side of the curve shows the most risky loans. A good model is accurate at each level, or bin, of risk. In DataRobot you can adjust how many bins, or levels of risk, you have. With the default of 10 bins, the loans are segmented into deciles by predicted risk.
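As a rough illustration of the binning described above, here is a minimal Python sketch. It is not DataRobot's implementation: it assumes equal-count bins (deciles when `n_bins=10`), and the function name `lift_table` and the sample loans are hypothetical.

```python
from statistics import mean

def lift_table(predicted, actual, n_bins=10):
    """Sort rows by predicted value and split them into n_bins equal-count bins.

    Returns one (avg_predicted, avg_actual) pair per bin, least risky bin first.
    """
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")
    if not 1 <= n_bins <= len(predicted):
        raise ValueError("n_bins must be between 1 and the number of rows")
    # Rank rows from least to most risky by predicted value.
    rows = sorted(zip(predicted, actual), key=lambda r: r[0])
    table = []
    for b in range(n_bins):
        # Integer bounds give bins whose sizes differ by at most one row.
        start = b * len(rows) // n_bins
        end = (b + 1) * len(rows) // n_bins
        chunk = rows[start:end]
        table.append((mean(p for p, _ in chunk), mean(a for _, a in chunk)))
    return table

# Hypothetical loans: predicted default probability and whether each one defaulted (1) or not (0).
predicted = [0.05, 0.10, 0.15, 0.20, 0.40, 0.45, 0.70, 0.80]
actual = [0, 0, 0, 1, 0, 1, 1, 1]
for i, (p, a) in enumerate(lift_table(predicted, actual, n_bins=4), start=1):
    print(f"bin {i}: avg predicted {p:.3f}, actual default rate {a:.2f}")
```

Plotting the two columns of this table against bin number gives the predicted and actual curves of a lift chart; with a 0/1 target, the actual average in each bin is the observed default rate.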

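There's a good joke that captures what we're trying to measure: a man with his head in an oven and his feet in the freezer says that, on average, he's comfortable. In the same way, a model can look accurate on average while being badly wrong at both ends of the risk range.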
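To put numbers on the joke, here is a small Python example with made-up data: two equal-size bins of loans where the overall averages match while each bin is off in opposite directions.

```python
from statistics import mean

# Made-up data: 20 loans split into two equal-size bins of predicted risk.
# Low-risk bin: the model predicts a 30% default rate, but only 1 of 10 loans defaulted.
low_pred, low_actual = [0.3] * 10, [1] + [0] * 9
# High-risk bin: the model predicts a 50% default rate, but 7 of 10 loans defaulted.
high_pred, high_actual = [0.5] * 10, [1] * 7 + [0] * 3

# Overall the averages match, so the model looks "comfortable" on average.
print(f"overall: predicted {mean(low_pred + high_pred):.2f}, actual {mean(low_actual + high_actual):.2f}")

# Bin by bin, it overpredicts the safe loans and underpredicts the risky ones.
for name, preds, actuals in [("low risk", low_pred, low_actual), ("high risk", high_pred, high_actual)]:
    print(f"{name}: predicted {mean(preds):.2f}, actual {mean(actuals):.2f}")
```

Overall, both the predicted and actual default rates come out at 0.40, but the per-bin lines show 0.30 vs 0.10 and 0.50 vs 0.70. Only a binned view such as the lift chart reveals that.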

The DataRobot documentation has a more in-depth explanation, which I highly encourage you to read as well.

Cheers,

Duncan



Lift charts are useful for seeing how a model performs across the range of its predictions. Whether the problem is regression or classification, we rank-order the model's predictions on our historical data from lowest to highest. Since we know the target values of that historical data, we can compare the predictions with the actual values.

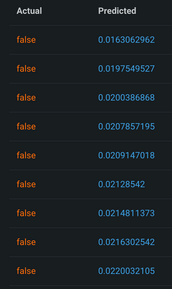

To make this easier to see on a lift chart, we group our predictions and actuals into bins and plot the average of each bin.

We can also see the actual and predicted values used to make this chart by clicking "Enable Drill Down" and clicking on the top or bottom bin.

A good lift chart has predicted and actual values close together. It also has some steepness, meaning the model makes a distinction between low and high predictions.
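One hypothetical way to put numbers on "close together" and "steepness" is sketched below in Python. Neither measure is a DataRobot metric; both are illustrative assumptions that take a lift table as a list of (average predicted, average actual) pairs, lowest bin first, and the example tables are made up.

```python
def summarize_lift(table):
    """Return (closeness, steepness) for a lift table of (avg_predicted, avg_actual) pairs.

    closeness: mean absolute gap between predicted and actual bin averages (lower is better).
    steepness: actual average in the top bin minus the bottom bin (larger means more separation).
    Both measures are illustrative, not DataRobot metrics.
    """
    if not table:
        raise ValueError("table must contain at least one bin")
    closeness = sum(abs(p - a) for p, a in table) / len(table)
    steepness = table[-1][1] - table[0][1]
    return closeness, steepness

# Made-up tables: one model separates low and high predictions, the other barely does.
separating = [(0.05, 0.04), (0.20, 0.22), (0.60, 0.58)]
flat = [(0.30, 0.29), (0.31, 0.30), (0.32, 0.33)]
for name, table in [("separating", separating), ("flat", flat)]:
    closeness, steepness = summarize_lift(table)
    print(f"{name}: closeness {closeness:.3f}, steepness {steepness:.2f}")
```

Both made-up models keep predicted and actual values within about 0.02 of each other, but only the first one shows the steepness that indicates a real distinction between low and high predictions.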

The lift chart lets you evaluate how the model performs across the range of predictions, from low to high. You may be more interested in a specific end of the lift chart for your use case. For example, if predicting uncommon instances of fraud, you may be more interested in performance at the high end of the lift chart when the model actually predicts fraud.


Both answers above are correct, each in its own way.

There are two things in DataRobot that you can refer to as a "lift chart".

The first one, on the "Lift chart" tab, compares predicted vs. actual values, binned by predicted value.

The second one, which describes accuracy on the top % of predictions, can be found on the ROC curve tab (binary classification problems only), in the third panel with the Cumulative charts. That chart has two modes: gains (what fraction of the actual target class is captured by the top % of predictions; e.g. if the top 10% of predictions contain 20% of the target class we're interested in, one point on the curve is X: 10%, Y: 20%) and lift (the improvement over random sampling; in the example above that is a twofold improvement, so the point is X: 10%, Y: 2).
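The gains and lift arithmetic in the example above can be reproduced with a short Python sketch. It assumes a binary 0/1 target and ranks rows by predicted score, highest first; how DataRobot rounds the top-% cutoff or breaks ties is not covered here, and `gains_and_lift` plus the 100-row dataset are hypothetical.

```python
def gains_and_lift(scores, actual, top_fraction):
    """Cumulative gain and lift for the top `top_fraction` of rows ranked by score.

    actual holds 1 for the target class and 0 otherwise.
    """
    n = len(scores)
    k = round(n * top_fraction)  # rows in the top slice
    total_positives = sum(actual)
    if k == 0 or total_positives == 0:
        raise ValueError("need a non-empty top slice and at least one positive row")
    # Rank rows from highest to lowest score and count positives in the top slice.
    ranked = sorted(zip(scores, actual), key=lambda r: r[0], reverse=True)
    captured = sum(a for _, a in ranked[:k])
    gain = captured / total_positives  # share of all positives found in the top slice
    lift = gain / (k / n)  # vs. random sampling, which would find k/n of the positives
    return gain, lift

# Hypothetical data: 100 rows with 10 positives, 2 of which are among the top 10 scores.
scores = [100 - i for i in range(100)]  # already in descending order
actual = [1] * 2 + [0] * 8 + [1] * 8 + [0] * 82
gain, lift = gains_and_lift(scores, actual, 0.10)
print(f"X: 10%, gains Y: {gain:.0%}, lift Y: {lift:.1f}")  # prints: X: 10%, gains Y: 20%, lift Y: 2.0
```

Lift is simply gain divided by the share of rows selected, which is why the same top-10% slice gives a gains point of 20% and a lift point of 2.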