The simple basics (Max Targets, Expand Existing Model)

Hi TobeT,

Welcome to the community!

1. DataRobot can only predict one target feature at a time. The target can be continuous (regression), binary categorical (classification), or categorical with more than two classes (multiclass).

If you had a dataset with 1000 possible targets and wanted to predict them all, then you would have to create 1000 different projects.
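To make the one-target-per-project point concrete, here is a minimal sketch of looping over targets. The names `start_one_project_per_target`, `fake_start_project`, `loans.csv`, and the target column names are all hypothetical; in practice `start_project` would wrap whatever client call you use to create a project, which is stubbed out here so the sketch runs on its own.

```python
def start_one_project_per_target(dataset_path, targets, start_project):
    """Start a separate project for every target column.

    start_project is a caller-supplied function (hypothetical here) that
    takes (dataset_path, target, project_name) and returns a project handle.
    """
    projects = {}
    for target in targets:
        # Each target gets its own project, because a project models one target.
        projects[target] = start_project(dataset_path, target, f"predict_{target}")
    return projects


def fake_start_project(dataset_path, target, project_name):
    # Stand-in for a real client call; it only records what would be created.
    return {"dataset": dataset_path, "target": target, "name": project_name}


projects = start_one_project_per_target(
    "loans.csv",  # hypothetical dataset
    ["default", "late_payment", "prepayment"],  # hypothetical target columns
    fake_start_project,
)
print(len(projects), "projects planned")
```

With 1000 targets the loop is the same, just with a longer list.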

2. DataRobot monitors new data rather than learning from it incrementally. After you build your model, you will make predictions on new data. DataRobot tracks the distribution of the training data and of the new scoring data for the ten most impactful features. If the new scoring data looks very different from your training data, DataRobot flags this and notifies you. This is called data drift.

So, for example, suppose you are trying to predict loan default. When you trained the model, your firm only offered 36- and 72-month loans, and it then adds a new loan length, say 60 months. This is going to change the distribution of that feature. If it is an important feature in your model, you are going to want to detect that change and retrain your model. Drift tracking lets you be strategic about when you retrain your models.
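For anyone who wants to see what a drift check looks like numerically, here is a rough sketch using the Population Stability Index (PSI) on the loan-term example. This is one common drift metric; whether it matches what DataRobot computes internally is an assumption on my part, and the sample data, the `eps` floor, and the 0.25 rule of thumb mentioned in the comments are illustrative only.

```python
import math
from collections import Counter


def category_shares(values, categories, eps=1e-4):
    """Share of each category, floored at eps so unseen categories avoid log(0)."""
    counts = Counter(values)
    total = len(values)
    return {c: max(counts[c] / total, eps) for c in categories}


def population_stability_index(training, scoring, eps=1e-4):
    """PSI = sum over categories of (q - p) * ln(q / p), p=training, q=scoring."""
    categories = set(training) | set(scoring)
    p = category_shares(training, categories, eps)
    q = category_shares(scoring, categories, eps)
    return sum((q[c] - p[c]) * math.log(q[c] / p[c]) for c in categories)


# Made-up data: training only has 36- and 72-month loans.
training_terms = [36] * 600 + [72] * 400
scoring_same = [36] * 300 + [72] * 200                # same mix as training
scoring_new = [36] * 250 + [60] * 150 + [72] * 100    # 60-month loans appear

psi_same = population_stability_index(training_terms, scoring_same)
psi_new = population_stability_index(training_terms, scoring_new)

# A PSI above roughly 0.25 is often treated as a significant shift.
print(f"same mix: {psi_same:.3f}, new loan length: {psi_new:.3f}")
```

The unchanged mix scores zero, while the new 60-month category pushes the index well past the usual alert level.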

3. When DataRobot creates a model, it looks for key patterns in the data related to the target. If you want to add new training data to a model, the platform needs to start again from the beginning. You do this by creating a new project.

Does this answer your questions?

Emily

I think I understand now TobeT, sorry for the confusion.

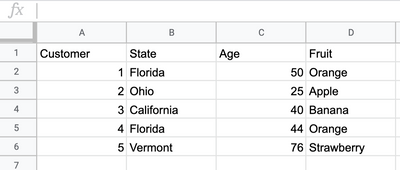

If I am correct, then you are asking how many different types of fruit could one predict if that was the categorical target feature?

See example below: the goal here is to use location and age to predict type of fruit purchase:

This would be multiclass problem, and with DataRobot you can have a target with up to 100 classes. However, the more classes you have the harder the problem becomes.
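Before uploading, it can help to count the classes in your target column. A small sketch follows, assuming the 100-class limit quoted above still applies to your version; `summarize_target`, `MAX_CLASSES`, and the fruit data are made up for illustration.

```python
from collections import Counter

MAX_CLASSES = 100  # limit quoted in this thread; check it for your version


def summarize_target(values, max_classes=MAX_CLASSES):
    """Count distinct classes in a target column and report the rarest one."""
    if not values:
        raise ValueError("target column is empty")
    counts = Counter(values)
    rarest_class, rarest_count = counts.most_common()[-1]
    return {
        "n_classes": len(counts),
        "within_limit": len(counts) <= max_classes,
        "rarest": (rarest_class, rarest_count),
    }


# Made-up target column: type of fruit purchased.
fruit_purchases = ["apple", "banana", "apple", "cherry", "banana", "apple"]
summary = summarize_target(fruit_purchases)
print(summary)
```

Very rare classes are worth a look too, since a class with only a handful of rows gives any model little to learn from.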

2. There are a few ways you can get predictions out of DataRobot.

The first is to use the GUI to upload scoring data and compute predictions. This is good for ad hoc analyses or for scoring you don't have to do very often.

The second is to create a deployment with the API. You can then send data to the deployment using a script from the integrations tab. This also allows you to track data drift. This is the most common way that people deploy models with DataRobot. We have customer-facing data scientists and field engineers who could help you set something like this up.
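As a rough idea of what such a script does, the sketch below builds (but does not send) a CSV scoring request for a deployment. Treat the details as assumptions: the URL path, the header names, and the content type follow the general shape of the prediction scripts I have seen, and the host, deployment ID, and token are placeholders. The script shown in your own integrations tab is the authoritative version.

```python
import csv
import io
import urllib.request


def build_prediction_request(host, deployment_id, api_token, rows, datarobot_key=None):
    """Build a POST request carrying the scoring rows as CSV (nothing is sent)."""
    if not rows:
        raise ValueError("need at least one row to score")

    # Serialize the rows to CSV, using the keys of the first row as the header.
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)

    headers = {
        "Content-Type": "text/csv; charset=UTF-8",  # assumed content type
        "Authorization": f"Bearer {api_token}",
    }
    if datarobot_key:
        headers["DataRobot-Key"] = datarobot_key  # assumed to be optional

    # Assumed URL shape; copy the real one from your deployment's script.
    url = f"{host.rstrip('/')}/predApi/v1.0/deployments/{deployment_id}/predictions"
    return urllib.request.Request(
        url, data=buffer.getvalue().encode("utf-8"), headers=headers, method="POST"
    )


request = build_prediction_request(
    "https://example.invalid",  # placeholder prediction server
    "abc123",                   # placeholder deployment ID
    "TOKEN",                    # placeholder API token
    [{"location": "north", "age": 30}, {"location": "south", "age": 45}],
)
print(request.get_method(), request.full_url)
```

Sending it would be a matter of passing `request` to `urllib.request.urlopen` once the placeholders are replaced with real values.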

You can also download standalone scoring code. This lets you score your data off-network or at very low latency.

Finally, I believe we have a tool where you can make predictions in Alteryx. Here is a data sheet that talks about this in more detail.

@Michael_Green might have more information about this.

I hope this helps. Please feel free to reach out with any more questions.

Best wishes,

Emily

Thanks Emily,

That definitely makes it clear. DR has proven to be incredibly accurate with 5 classes, so I will up my game and see how the accuracy holds with more classes.

I'm about to start a 3-month data science course and hope to get a bit more insight into how to integrate with Alteryx or similar. I will see how I go and post in this community if I struggle.

Thanks for the help!

Tobe