Time Series - COVID impacted data

Hi

I'm new to the platform so apologies if my syntax is incorrect - here to learn

I've been trying to make forecasts with some monthly data that seems to be heavily impacted by COVID. The models DataRobot is giving me seem to perform worse than a traditional TS approach (I used Excel for this), and I just wanted to know if there is a way for DataRobot to provide traditional TS models only. I tried the "manual" model selection, but I could not find any model other than the baseline model that resembles a traditional TS model.

I have also tried tweaking the best-performing models, ranked according to the error metric, but I think there is insufficient post-COVID data to make any reliable predictions - I stand to be corrected though.

Any help on this issue would be really appreciated 🙂

Accepted Solutions

Hi Matt!

Thanks for that really really informative reply, I have a way better understanding of the models DataRobot implements on the data - I will look into the model selection process further and play around with it to gain an even better understanding too.

Regarding the adjustments that can be made to help improve the chosen model, everything you mentioned in the last paragraph I initially took into consideration (varying FDW, including a calendar, making some features KIA, varying forecast period). However, I overlooked splitting up the project into two, which in hindsight I should've done as well, as they do have variable behaviour - so thank you for highlighting that point. Hopefully that does the trick in terms of improving the overall accuracy of the model.

Again, thanks so much for this great reply!

Hi Shai!

The baseline models are mainly designed to give you a model that uses the last known data point to predict the next data point. You may hear this referred to as a "naive" approach when talking to different people. There are several options for a "traditional" time series approach that you could try from the repository on your data, as well as a few other options which might help.

They may not be obvious as traditional time series models initially, but if you look in the repository you will see models that have ARIMA, AUTOARIMA, Mean Response Regressor, and other names in them. You can also use the icons to help differentiate these. I can explain the differences between some of the major model types, which might also help.

- Baseline models are just naive models that look at the last known value, or a simple average of several last known values (such as the average of the last week).

- You will likely see a lot of models with an `XGBoost` icon in blue, or that have the name `eXtreme Gradient Boosting` with some additional functionality added on. These along with the `LightGBM` models use decision trees at each forecast distance you specify to try and make the most accurate predictions. In a lot of cases these work very well, but they are probably not what you would consider traditional.

- Another class of models you will see are `KERAS` based models. These are deep learning models, and they do incorporate a traditional regressive nature as they are based around LSTMs, but I would probably advise using a different model type unless you have a larger amount of historical data or many series to train on.

- `Eureqa` models try to learn and make predictions by creating a complex equation that can be used to predict the target.

- `Mean Response Regressors` and `Ridge Regressors` are a much more traditional way of modeling, and these will compute regressions on the data during training.

- `ARIMA` and `VAR` (you will see other variations such as AUTOARIMA and VARMAX) are probably what you are thinking of when you think of a traditional model. These are autoregressive models, which look at historical data and use it to guide predictions for the next event that is occurring. There are quite a few different variations of these, but if you are doing modeling in Excel, there is a very high likelihood the model is an ARIMA.

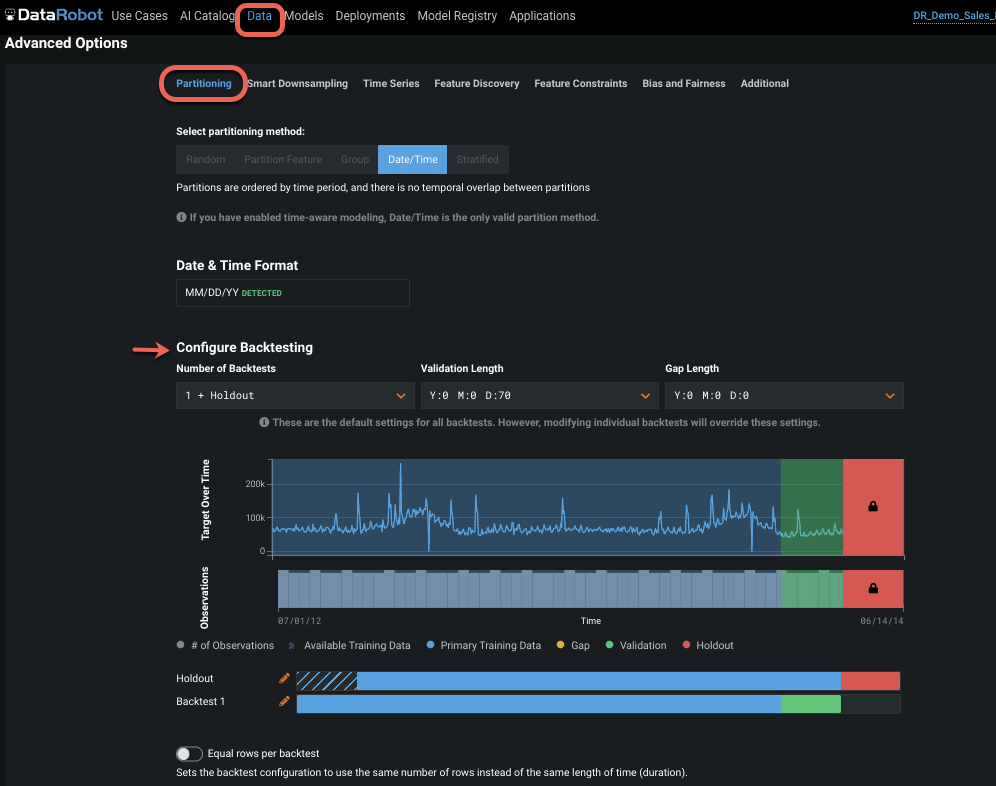
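
To make the baseline idea from the list above concrete, here is a minimal sketch in plain Python of the two naive forecasts described: repeating the last known value, or averaging the last few known values. This is not DataRobot code; the function names and the sample numbers are made up for illustration.

```python
from statistics import mean


def naive_last_value(history, horizon=1):
    """Forecast every future step as the last known value."""
    if not history:
        raise ValueError("history must not be empty")
    return [history[-1]] * horizon


def naive_window_mean(history, window=3, horizon=1):
    """Forecast every future step as the mean of the last `window` values."""
    if not history:
        raise ValueError("history must not be empty")
    recent = history[-window:]
    return [mean(recent)] * horizon


# Hypothetical monthly values with a sudden drop, similar to a COVID shock.
monthly_sales = [120, 130, 125, 60, 70, 80]
print(naive_last_value(monthly_sales, horizon=2))
print(naive_window_mean(monthly_sales, window=3, horizon=2))
```

Comparing any other model against these two numbers is a quick sanity check: a model that cannot beat them on recent data is probably not learning anything useful from the history.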

Some other things you might consider, which could help with training, are using calendar features to indicate the start of COVID in your modeling data, or possibly removing some historical data using the backtests or trimming the data manually before loading it. This can help to prevent the model learning too much about the past if it is no longer relevant to what is happening now. In the Advanced Options at the start screen you can configure the number and length of your backtests. You can use this to make sure that your training and validation periods are relevant, that you have good coverage over the COVID time window, and that it is not entirely excluded from training (e.g. all of the COVID period is in your holdout, or validation + holdout, with none in training).
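
As a rough illustration of the data preparation described above, the sketch below flags the COVID period, trims older history, and checks how much of the COVID window lands in training versus holdout before anything is uploaded. It is plain Python rather than DataRobot functionality, and the start date, column names, helper names, and partition sizes are all assumptions chosen for the example.

```python
from datetime import date

# Assumed start of the COVID period; adjust to whatever fits your data.
COVID_START = date(2020, 3, 1)


def add_covid_flag(rows, covid_start=COVID_START):
    """Return copies of the rows with an `is_covid` 0/1 column added."""
    return [{**row, "is_covid": int(row["date"] >= covid_start)} for row in rows]


def trim_history(rows, earliest):
    """Drop rows dated before `earliest`."""
    return [row for row in rows if row["date"] >= earliest]


def covid_share(rows):
    """Fraction of rows that fall in the flagged COVID period."""
    if not rows:
        return 0.0
    return sum(row["is_covid"] for row in rows) / len(rows)


# Hypothetical monthly data covering January 2019 to December 2020.
rows = [
    {"date": date(2019 + i // 12, i % 12 + 1, 1), "sales": 100 + i}
    for i in range(24)
]

flagged = add_covid_flag(rows)
recent = trim_history(flagged, date(2019, 7, 1))

# Hold out the last three months and train on the rest.
training, holdout = recent[:-3], recent[-3:]
print("COVID share in training:", covid_share(training))
print("COVID share in holdout:", covid_share(holdout))
```

If the training share comes out as zero, the model never sees COVID-era behaviour, which is the situation the backtest settings are meant to help you avoid.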

One final thing you may consider doing is placing series that behave very differently from one another into two separate projects. I'm not 100% sure what type of data you have, but for sales data, if you have products with very low or intermittent sales and products with a fairly regular sales cycle, breaking the training data up and creating two projects can also lead to a significant accuracy boost.
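
One simple way to decide which series go into which project is to look at how often each series is zero. The sketch below is only an illustration of that idea in plain Python; the 50% threshold, the function names, and the sample series are assumptions, and the right cut-off depends on your data.

```python
def zero_share(values):
    """Fraction of observations in a series that are exactly zero."""
    if not values:
        raise ValueError("series must not be empty")
    return sum(1 for v in values if v == 0) / len(values)


def split_by_intermittency(series, threshold=0.5):
    """Split a dict of {series_id: values} into (regular, intermittent)."""
    regular, intermittent = {}, {}
    for series_id, values in series.items():
        target = intermittent if zero_share(values) >= threshold else regular
        target[series_id] = values
    return regular, intermittent


# Hypothetical monthly sales for three products.
sales = {
    "product_a": [10, 12, 11, 13, 12, 14],
    "product_b": [0, 0, 3, 0, 0, 1],
    "product_c": [5, 0, 6, 7, 5, 6],
}

regular, intermittent = split_by_intermittency(sales)
print("regular:", sorted(regular))
print("intermittent:", sorted(intermittent))
```

Each group could then be saved as its own dataset and used to start a separate project.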

Looks like great information from @matt ! 🥳 You may want to have a look at this community article, Automated Time Series Walkthrough, if you haven't found it already.

Also here's a quick image to show where to configure backtesting - fyi

Hi @Linda, it certainly is a great reply.

I have actually seen this article and a few others, as well as some videos after digging around in the community forums. I think the issue was that it was a lot of information to take in initially and, because I'm still fairly new to DataRobot, I was not sure if I was missing something when things went wrong. But @matt's response really helped deconstruct what I can do to improve the model and troubleshoot problems that may occur.

Cannot get into the provided link Linda: