time to completion (urgent)

How does one find out how long prediction explanations are going to take to compute from the web interface?

Accepted Solutions

@IraWatt Thanks for your input. And I take your point about giving more information about the dataset.

The dataset is 400k rows with 120 columns of mixed type: numerical, categorical, and string. The model in question is from the extreme gradient boosted trees family; timings for other models vary. The main point is that, to estimate the time, one needs to calibrate against the specific model. Still, one hour per 100k rows seems a good basic rule of thumb for the maximum time taken.

Prediction explanations would be computed row by row, so one could at least find out how far through the file the process had got. For a large, homogeneous file, this should be a very good indication of how much time is left, modulo issues such as worker availability.
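
If you can see how many rows have been processed, the time left follows by linear extrapolation under the same homogeneous-file assumption. Below is a minimal sketch in Python. `estimate_remaining` is a hypothetical helper, not part of any DR API, and the row count is assumed to come from wherever you can observe it:

```python
from datetime import timedelta


def estimate_remaining(rows_done: int, total_rows: int, elapsed: timedelta) -> timedelta:
    """Linearly extrapolate the time left from progress so far.

    Assumes every row costs roughly the same (a homogeneous file);
    queueing and worker availability are ignored.
    """
    if rows_done <= 0:
        raise ValueError("need at least one processed row to extrapolate")
    if rows_done > total_rows:
        raise ValueError("rows_done cannot exceed total_rows")
    # Multiply before dividing to keep round numbers exact in floating point.
    seconds_left = elapsed.total_seconds() * (total_rows - rows_done) / rows_done
    return timedelta(seconds=seconds_left)


# 100k of 400k rows done after one hour -> three hours left.
print(estimate_remaining(100_000, 400_000, timedelta(hours=1)))  # 3:00:00
```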

I have seen a screenshot of an older DR interface that did display a completion percentage on that page. Apparently it has been removed. It certainly should not have been: it is basic information that should be available in a simple way.

I do, of course, already know about the methods you mentioned. They are indirect guessing. What I am looking for is a direct indicator from the horse's mouth. My takeaway from your post is that DR no longer provides this information.

So, in future, I will simply remember that it took about one hour per 100k rows and apply that to later related runs. I might need a different calibration factor for each kind of model.
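
The calibration approach amounts to storing an hours-per-100k-rows factor for each model family and scaling it linearly to the next run. Below is a minimal sketch. The dictionary key `xgboost` and the helper names are hypothetical. The only figure taken from this thread is the rough upper bound of one hour per 100k rows for gradient boosted trees:

```python
# Hypothetical calibration table: hours per 100k rows, measured from past runs.
# Only the gradient-boosted-trees figure comes from this thread (a rough maximum);
# add other model families as you time them.
HOURS_PER_100K_ROWS = {
    "xgboost": 1.0,
}


def calibrate(rows: int, hours_taken: float) -> float:
    """Turn one observed run into an hours-per-100k-rows factor."""
    return hours_taken * 100_000 / rows


def estimate_hours(model_family: str, rows: int) -> float:
    """Scale the stored factor linearly to a new row count."""
    return HOURS_PER_100K_ROWS[model_family] * rows / 100_000


print(estimate_hours("xgboost", 400_000))  # 4.0
```

Linear scaling inherits the same caveats as the rule of thumb itself: it ignores queueing and worker availability.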

I hope others might benefit from this experience.

Hi @Bruce,

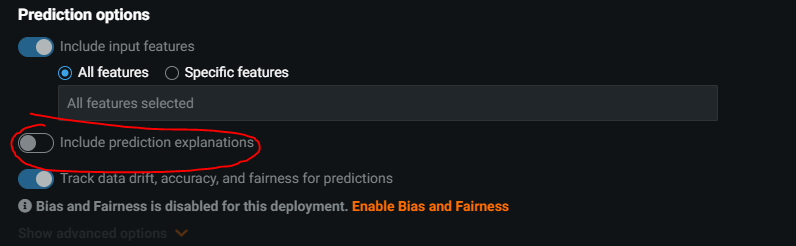

This is what I look at, but I don't know of an exact way to measure how long the compute will take. If it is taking a while, you could consider using a subset of the data. I believe that when you get prediction explanations from the models tab, it uses all of the training data, whereas in the deployment tab you can specify how much data to compute prediction explanations (PE) on.
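
If you would rather cut the data down yourself before scoring, a uniform random sample is one way to do it. The sketch below is a generic, hypothetical helper (`sample_rows` is not a DR function). It uses reservoir sampling from Python's standard library to keep k rows of a CSV in a single pass, without loading the whole file into memory:

```python
import csv
import io
import random


def sample_rows(src, k, seed=0):
    """Return (header, rows): the CSV header plus a uniform random sample
    of up to k data rows, read in one pass (reservoir sampling, Algorithm R)."""
    reader = csv.reader(src)
    header = next(reader)
    rng = random.Random(seed)  # fixed seed makes the subset reproducible
    reservoir = []
    for i, row in enumerate(reader):
        if i < k:
            reservoir.append(row)  # fill the reservoir first
        else:
            j = rng.randint(0, i)  # randint is inclusive on both ends
            if j < k:
                reservoir[j] = row  # keep row i with probability k/(i+1)
    return header, reservoir


# Demo on an in-memory CSV; with a real file, pass
# open("scoring_data.csv", newline="") instead (hypothetical filename).
data = io.StringIO("id,x\n" + "".join(f"{n},{2 * n}\n" for n in range(1000)))
header, rows = sample_rows(data, k=100)

out = io.StringIO()
writer = csv.writer(out)
writer.writerow(header)
writer.writerows(rows)
print(len(rows))  # 100
```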

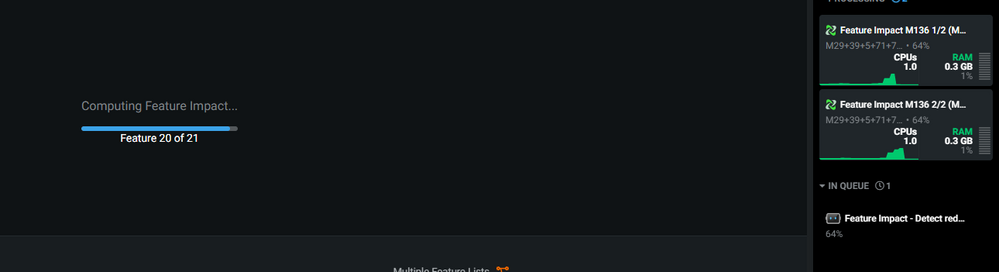

When computing prediction explanations, the first stage is calculating feature impact, which should give a visual indication of how many features have been processed.

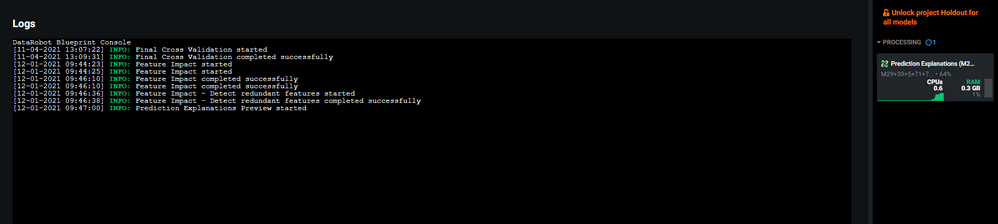

After that, you can look at the logs by clicking the tab in the process sidebar. The logs give an indication of how far through the PE process is, but not how much time is left.

It is definitely worth posting this as an idea for the platform if there isn't a more accurate way to measure progress.

@IraWatt to save the day again! Thanks so much for this. I've got more of my customers joining the community very soon 😄

@Eu Jin Awesome :D Have to get them hooked on community badge collecting!

@IraWatt consider that feedback passed on! And yes! Community badge collection is a thing! We have plans for more ways to collect badges and more, and hope to be able to share that roadmap information soon. Please stay tuned and keep scooping up badges :):)