Using data outside of AI catalog for predictions?

I have a question about using AI Catalog. If this has been answered somewhere else, I'll take that link!

Say I use a dataset (with data up to Day n) in AI Catalog to build a time series model. Now I've received some updated data and want to test the model's predictions on it to understand whether I should retrain the model with the new data (i.e., data from Day n + 1 onward).

Can I use data outside of AI Catalog for predictions? How?

Good question!

It is quite simple to get predictions on data from the AI Catalog, and easy to update the dataset as well.

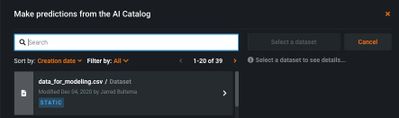

To get predictions from a dataset housed in the AI Catalog, go to the 'Predict' tab under a model on the Leaderboard and select 'Catalog' as the source:

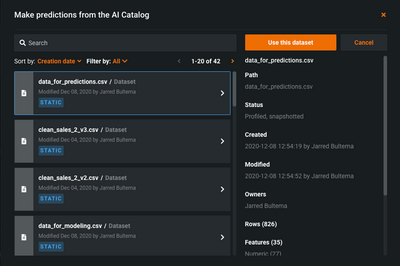

Next, simply select the dataset you want to use from the list of AI Catalog datasets you have access to:

So here, you have two choices:

1. Do you want to 'update' the dataset used for modeling and just add the new rows?

2. Do you want to create a new dataset in the AI Catalog?

If you want to update the dataset, you can do that in several ways, but let's assume you don't have the dataset handy on your local machine.

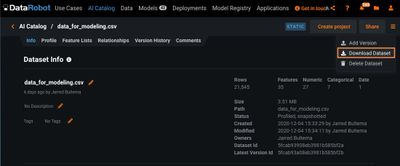

First, download the dataset from the AI Catalog:

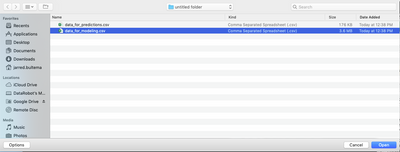

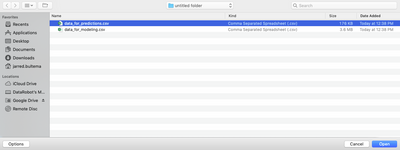

Next, make whatever changes you want and then save the file locally. Then you'll need to upload the dataset and you have two options.
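If the change you want is simply "keep only the rows that arrived after Day n", something like the following could do it before you upload. This is a minimal sketch using only the Python standard library; the column name `date`, the ISO date format, and the function name `extract_new_rows` are assumptions for illustration, not anything DataRobot requires.

```python
import csv
from datetime import date


def extract_new_rows(src_path, dst_path, last_training_day, date_column="date"):
    """Copy rows dated after last_training_day from src_path into dst_path.

    Assumes ISO-formatted dates (YYYY-MM-DD) in a column named `date_column`;
    both are placeholders for whatever your dataset actually uses.
    Returns the number of rows written.
    """
    written = 0
    with open(src_path, newline="") as src, open(dst_path, "w", newline="") as dst:
        reader = csv.DictReader(src)
        writer = csv.DictWriter(dst, fieldnames=reader.fieldnames)
        writer.writeheader()
        for row in reader:
            # Keep only rows strictly after the last day used for training.
            if date.fromisoformat(row[date_column]) > last_training_day:
                writer.writerow(row)
                written += 1
    return written
```

For example, `extract_new_rows("full.csv", "new_rows.csv", date(2023, 1, 1))` would write every row dated 2023-01-02 or later to `new_rows.csv` (both file names are hypothetical).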

If you've given the dataset a new name and/or it is just the new rows you want to score, you can use the 'Add Version' option and it will upload the dataset as a new dataset in the AI Catalog:

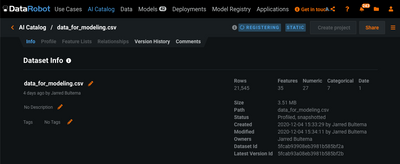

After selecting a file, it will take a moment to upload and register:

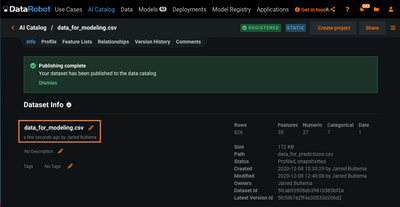

Once that has been completed, it will be available as a new dataset in the AI Catalog with the new file name. Notice that the last-updated field shows this file was just changed:

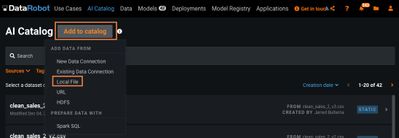

Option 2: create a new dataset.

With this option, you create a separate prediction file (with the newly updated rows). In that case, you'll give the dataset a new name and upload it as a new file to the AI Catalog:

Select the new file and upload:

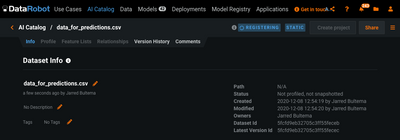

This is a new entry in the AI Catalog, with the default name assigned from the filename.

After this uploads and registers in the AI Catalog, it will be available under the 'Predict' tab, Catalog:

Hopefully this answers your question. Please feel free to follow up if you have other questions.

You may find it advantageous to leverage the AI Catalog, although there are several other ways to score new data.

One is to score data on your worker nodes; this is typically done during test/evaluation phases, when deciding which model on a leaderboard to take forward into a deployment and production workflow. Within a project, navigate to Models -> model on the leaderboard -> Predict -> Make Predictions. From here you can upload a file of up to 1 GB and have it scored in an asynchronous job (DataRobot will complete it when resources are available, so you'll have to check whether it has finished).
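Because the job is asynchronous, any script built around it ends up polling for completion. The loop below is a generic sketch of that pattern, not DataRobot's API: `check_status` is a hypothetical callable you would supply, and the status strings `"COMPLETED"` and `"ERROR"` are placeholders.

```python
import time


def wait_until_done(check_status, poll_seconds=5.0, timeout_seconds=600.0,
                    sleep=time.sleep):
    """Poll check_status() until it reports completion or the timeout elapses.

    check_status is a hypothetical callable standing in for however you
    query the job; "COMPLETED" and "ERROR" are placeholder status strings.
    Returns True on completion, False on timeout.
    """
    waited = 0.0
    while True:
        status = check_status()
        if status == "COMPLETED":
            return True
        if status == "ERROR":
            raise RuntimeError("scoring job failed")
        if waited >= timeout_seconds:
            return False
        # Jobs run when resources free up, so back off between checks.
        sleep(poll_seconds)
        waited += poll_seconds
```

The `sleep` parameter is injectable only so the loop can be exercised without real waiting.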

Another option is to score it on a dedicated scoring server, via a deployment. A deployment has additional advantages: DataRobot will provide operational statistics about the deployment's performance and monitor it for data drift and target drift. If you upload actuals, you can also view accuracy. This lets you understand how your model is performing over time, whether it may be decaying, and whether you should consider retraining and replacing it.
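If you want a rough version of that accuracy check outside the platform, you can compare the error on the new rows against the error you saw at training time. The sketch below assumes mean absolute error as the metric and a 10% tolerance; both are illustrative choices, and `should_retrain` is a made-up helper, not a DataRobot function.

```python
def mean_absolute_error(actuals, predictions):
    """Average absolute difference between actual and predicted values."""
    if not actuals or len(actuals) != len(predictions):
        raise ValueError("actuals and predictions must be non-empty and equal length")
    return sum(abs(a - p) for a, p in zip(actuals, predictions)) / len(actuals)


def should_retrain(baseline_mae, new_actuals, new_predictions, tolerance=0.10):
    """Return True when error on new data exceeds the baseline by more than tolerance.

    baseline_mae is the error observed on the original validation data;
    tolerance (10% here) is an arbitrary threshold you would tune yourself.
    """
    new_mae = mean_absolute_error(new_actuals, new_predictions)
    return new_mae > baseline_mae * (1 + tolerance)
```

A single threshold like this is only a starting point; with seasonal data you would likely want to compare like-for-like periods.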

For this option, you'll have to create a deployment: after selecting the model of interest on the leaderboard, select Predict -> Deploy -> Deploy Model. I would advise toggling drift tracking on. If you are ready or planning to add actuals, you can also populate the feature name of the unique ID you'll be sending in; you can always toggle this on later as well. For a quick try, you may want to toggle it off for now and proceed to hit the Create deployment button.

This process registers the model as a deployment, and it will now show up under your Deployments tab. The model is available to score data through a real-time Prediction API and our Batch Prediction API that wraps it. A GUI scoring option is also available under the deployment, similar to the scoring option available on the model directly on the leaderboard.

Navigate to Deployments and open your newly deployed model. There is a Predictions sub-tab with a Make Predictions option to interact with. If you're interested in the API options, look at the Prediction API tab. For automated / scheduled / programmatic / command-line CSV scoring, it's common to use one of the Batch scoring options. I would generally recommend the generic CLI tool, which you can download in a Python or PowerShell version. Some examples of using the CLI script are available here.
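To give a feel for the real-time route, here is a rough sketch of how a request to a deployment could be assembled with the Python standard library. The URL path and header names follow the pattern the Prediction API tab usually shows, but treat them as assumptions and copy the exact snippet from your own deployment; the host, deployment ID, token, and key are all placeholders, and time series deployments may need extra parameters not shown here.

```python
import urllib.request


def build_prediction_request(host, deployment_id, api_token, datarobot_key, csv_bytes):
    """Assemble (but do not send) a CSV scoring request for a deployment.

    All arguments are placeholders; take the real values, and the exact
    URL and headers, from the Prediction API tab of your deployment.
    """
    url = f"{host.rstrip('/')}/predApi/v1.0/deployments/{deployment_id}/predictions"
    headers = {
        "Content-Type": "text/csv; charset=UTF-8",
        "Authorization": f"Bearer {api_token}",
        "DataRobot-Key": datarobot_key,
    }
    return urllib.request.Request(url, data=csv_bytes, headers=headers, method="POST")


# Sending would then look like this (not executed here):
# with urllib.request.urlopen(request) as response:
#     predictions_json = response.read()
```

Building the request separately from sending it makes it easy to inspect the URL and headers before any data leaves your machine.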