What do the columns returned from my Predictions mean?

05-25-2022 10:23 AM

Question:

"I just ran a model and tested the predictions on the training data - can you explain what the columns mean?"

Accepted Solutions

05-25-2022 09:40 PM

Answer:

When you're in DataRobot and in the "Make Predictions" section, you can either:

- Load a brand new dataset that DataRobot has never seen before and predict values for it, or

- Use the training data to see how the model predicts against it. (I can see that this is what you've done because you have some additional columns in your results; let me explain what they mean.)

Expanding on this, getting prediction results on training data is a little "cheaty" in the sense that the model has already seen the data and knows most of the results already (i.e. whether someone actually exited the network or not).

Taking a step back, when you upload a dataset into DataRobot, it will usually split the data into 5 "folds", or equally sized pieces (you can control the number of folds in the advanced settings). It will also set aside a sixth chunk of data called the "Holdout", which DataRobot doesn't get to see and which is usually 20% of the data. (This holdout is the data that DataRobot will use as its "final exam" for the models that it trains.)

So, for example, if you uploaded 1000 records for the purposes of training a model, by default they would be split into:

- 5 x 160 records = 800 records for training and

- 1 x 200 records in the holdout
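The arithmetic above can be checked with a short sketch. This is only an illustration of the split sizes described here, not DataRobot's own code; the function name `partition_sizes` and its parameters are made up for this example.

```python
def partition_sizes(n_rows, holdout_fraction=0.20, n_folds=5):
    """Return (fold_sizes, holdout_size) for a holdout-plus-folds split.

    Hypothetical helper: it mirrors the defaults described in the post
    (20% holdout, 5 folds), not any actual DataRobot function.
    """
    holdout_size = round(n_rows * holdout_fraction)
    remaining = n_rows - holdout_size
    base, extra = divmod(remaining, n_folds)
    # Spread any remainder over the first folds so sizes differ by at most 1.
    fold_sizes = [base + (1 if i < extra else 0) for i in range(n_folds)]
    return fold_sizes, holdout_size


fold_sizes, holdout_size = partition_sizes(1000)
print(fold_sizes, holdout_size)  # [160, 160, 160, 160, 160] 200
```

Changing `n_folds` or `holdout_fraction` shows how the sizes move if you adjust those settings.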

This is all customisable in the Advanced Options.

Then it will use the 5 folds of data iteratively to identify the best model. The process goes something like this:

- You tell DataRobot you want to predict "exits" and upload the training data.

- DataRobot can tell that this is a binary classification problem and selects a number of modeling approaches and "blueprints".

- The training data is split as I have described above, i.e. into 6 pieces: 5 for training and 1 for the holdout.

- It will start with the first "fold" of 160 rows, use this with all of the 20 or so models it has selected, and see how each one performs. Think of this like a competition or survival of the fittest.

- If a model performs well enough on 16% of the data, it will then be given the next 16% to train on to improve the results

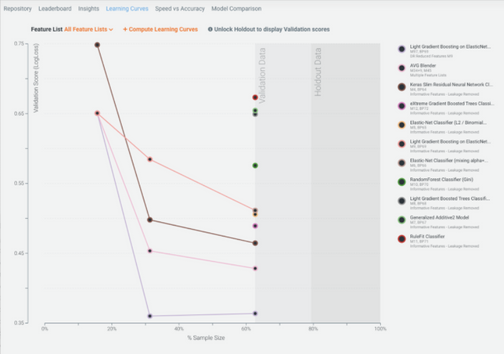

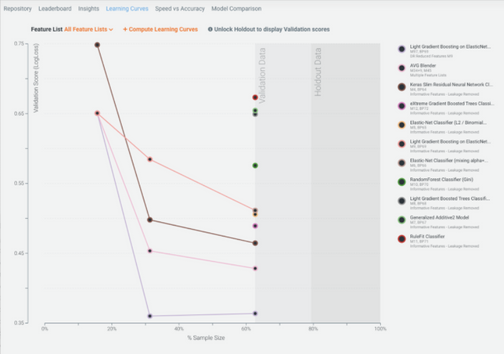

- and so on, until you get to using 80% of the data. (I added a screenshot that shows how a few of the models improve: as the models get access to more data they get better, but we don't bother giving more data to the worse-performing models.)

- As a last step, you can then use the holdout to really test your model. If you want to do that for all of the models, you need to press the "Unlock project Holdout for all models" button:

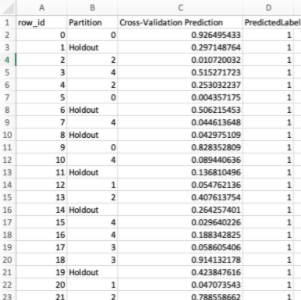
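To make the "survival of the fittest" idea a bit more concrete, here is a toy sketch. Everything in it is an assumption made for illustration: the model names, the made-up `skill` numbers, the scoring rule, and the "keep the top half" cut-off. It is not how DataRobot actually scores or selects models; it only shows the general pattern of giving more data to the better performers.

```python
def run_rounds(models, sample_sizes, score_fn, keep_fraction=0.5):
    """Score surviving models on growing samples, dropping the weakest each round.

    Hypothetical helper for illustration only.
    """
    survivors = list(models)
    history = []
    for size in sample_sizes:
        # Rank the current survivors from best to worst at this sample size.
        scored = sorted(((score_fn(m, size), m) for m in survivors), reverse=True)
        history.append((size, [m for _, m in scored]))
        # Keep at least one model; the keep_fraction is an arbitrary choice.
        keep = max(1, int(len(scored) * keep_fraction))
        survivors = [m for _, m in scored[:keep]]
    return survivors, history


# Made-up baseline "skill" per model; real scores come from actual training.
skill = {"model_a": 0.60, "model_b": 0.70, "model_c": 0.55, "model_d": 0.65}


def toy_score(model, n_rows):
    # Toy rule: every model gets slightly better with more rows.
    return skill[model] + 0.0001 * n_rows


# 160 rows is 16% of the 1000-row example; 800 rows is the full 80%.
survivors, history = run_rounds(list(skill), [160, 320, 480, 640, 800], toy_score)
print(survivors)  # ['model_b']
```

In this toy run, four models start on 160 rows, two carry on to 320 rows, and only the strongest one is trained on the larger samples.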

As for the columns in your results:

- Row ID - The row of the training dataset

- Partition - Which "fold" that row was in

- Cross-Validation Prediction - The score that the model gave the row

- PredictedLabel - Whether the row is considered "Likely to Exit" or "Not Likely to Exit". This depends on the Cross-Validation Prediction and on what you've set your threshold to.
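Here is a minimal sketch of how a threshold turns a score into a label. The example rows, the 0.5 default threshold, and the choice of `>=` for the comparison are assumptions for illustration, and `predicted_label` is a made-up helper rather than a DataRobot function.

```python
def predicted_label(score, threshold=0.5,
                    positive="Likely to Exit", negative="Not Likely to Exit"):
    """Map a prediction score to a label using a threshold.

    Assumption: scores at or above the threshold count as positive.
    """
    return positive if score >= threshold else negative


# Made-up rows shaped like the columns described above.
rows = [
    {"row_id": 0, "partition": 1, "cv_prediction": 0.82},
    {"row_id": 1, "partition": 3, "cv_prediction": 0.30},
]

for row in rows:
    row["predicted_label"] = predicted_label(row["cv_prediction"])
    print(row["row_id"], row["cv_prediction"], row["predicted_label"])
```

Lowering the threshold (for example to 0.25) would flip the second row to "Likely to Exit", which is why the same score can produce a different PredictedLabel depending on your settings.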
