what is done for unbalanced data?

Hi all - How do you all deal with unbalanced data? What adjustments do you make to balance it? And can someone explain what techniques DataRobot applies to the unbalanced data?

Appreciate all the help

DataRobot recognizes different types of unbalanced data and has multiple approaches for dealing with it.

For binary and zero-inflated targets, DataRobot might recommend Smart Downsampling.

For regression problems where the data is skewed, DataRobot recommends metrics that fit the skewed data better.

While creating models, you can also set a weighting parameter that might compensate for unbalanced data.

Finally, once the models are built, you can compare them using metrics that are tolerant of balance issues, for example AUC for binary classification or Gini Norm for regression.
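To illustrate why a balance-tolerant metric matters, here is a minimal sketch in plain Python. The data is made up, and `roc_auc` and `accuracy` are illustrative helpers, not DataRobot functions. It scores a useless model that gives every row the same low score on a 95/5 split: accuracy looks excellent, while AUC shows the model cannot rank positives above negatives.

```python
def roc_auc(labels, scores):
    """Probability that a random positive outscores a random negative (ties count half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("AUC needs at least one row of each class")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def accuracy(labels, scores, threshold=0.5):
    """Share of rows classified correctly when scores >= threshold mean 'positive'."""
    correct = sum(1 for y, s in zip(labels, scores) if int(s >= threshold) == y)
    return correct / len(labels)


# Hypothetical unbalanced data: 95 negatives, 5 positives.
labels = [0] * 95 + [1] * 5
# A "model" that assigns the same low score to every row.
constant_scores = [0.05] * 100

naive_accuracy = accuracy(labels, constant_scores)  # high, despite finding no positives
naive_auc = roc_auc(labels, constant_scores)        # chance level
print(naive_accuracy, naive_auc)
```

The pairwise loop is quadratic, so it is only meant for small illustrations like this one.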

Just to expand on what my colleague said: DataRobot doesn't deal with unbalanced data automatically, but it does provide Smart Downsampling for this purpose. The step isn't automated because how much of the majority class to downsample depends on the problem at hand.

Here are the steps:

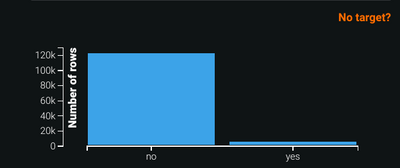

Let's assume you have the following unbalanced class.

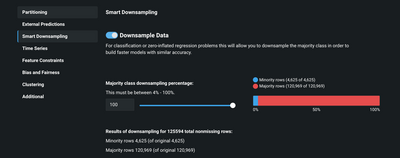

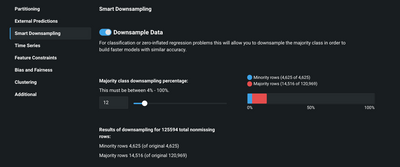

1. Go to Smart Downsampling. To activate downsampling, first slide Downsample Data to on. As you can see, the ratio between the majority and minority class is over 20X.

2. Now you can change the majority class downsampling percentage. As you move the slider, you are shown the new size of the majority class. Doing so, however, distorts the original ratio between the majority and minority classes. To rectify this, DataRobot creates a weight feature. The weight is 1 if the target equals the minority class; otherwise it is the ratio between the original count of the majority class and its count after downsampling. In this case, that is 120,969/14,515, or about 8.33.

Note: downsampling is performed on all sets, i.e., even on the holdout. You will also notice that the metrics on the Leaderboard are all prefixed with the word Weighted.
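The weight rule from step 2 can be sketched in a few lines of Python. This only reproduces the arithmetic described above; `downsample_weight` is a hypothetical helper name, not something DataRobot exposes.

```python
def downsample_weight(is_minority, majority_before, majority_after):
    """Weight as described above: 1 for minority rows, else original/downsampled majority count."""
    if not 0 < majority_after <= majority_before:
        raise ValueError("downsampled count must be positive and no larger than the original")
    return 1.0 if is_minority else majority_before / majority_after


# Counts taken from the example in this thread.
majority_before = 120_969
majority_after = 14_515

majority_weight = downsample_weight(False, majority_before, majority_after)
minority_weight = downsample_weight(True, majority_before, majority_after)

# Summing the weights of the kept majority rows recovers the original majority count,
# which is how the original class ratio is preserved in weighted metrics.
effective_majority = majority_after * majority_weight

print(round(majority_weight, 2))  # 8.33
```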

Pb12, I would also recommend the DataRobot Deeper Dive class linked below. It elaborates on what my colleagues have posted above about unbalanced data, for example which partitioning scheme should be used, as well as Smart Downsampling.

https://university.datarobot.com/datarobot-deeper-dive

Instead of messing with my data, I prefer to just adjust the prediction threshold of my models. I use the confusion matrix on the ROC Curve tab of my model to choose a threshold that compensates for the imbalance in my dataset. I like to assign a cost or benefit to all four quadrants of my confusion matrix and then choose a threshold that maximizes my profit/minimizes my losses. Let me know if you'd like me to elaborate further.
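For anyone who wants to try this outside the UI, here is a rough sketch of the idea in plain Python. The labels, scores, and payoff values are all made up for illustration, and the helper names are hypothetical. It assigns a payoff to each quadrant of the confusion matrix and picks the candidate threshold with the highest total.

```python
def confusion_counts(labels, scores, threshold):
    """Return (tp, fp, tn, fn) when scores >= threshold are predicted positive."""
    tp = fp = tn = fn = 0
    for y, s in zip(labels, scores):
        predicted_positive = s >= threshold
        if predicted_positive and y == 1:
            tp += 1
        elif predicted_positive and y == 0:
            fp += 1
        elif not predicted_positive and y == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def total_payoff(labels, scores, threshold, payoff):
    """Sum the payoff of every row according to its confusion-matrix quadrant."""
    tp, fp, tn, fn = confusion_counts(labels, scores, threshold)
    return tp * payoff["tp"] + fp * payoff["fp"] + tn * payoff["tn"] + fn * payoff["fn"]


def best_threshold(labels, scores, payoff):
    """Try each distinct score as a threshold and keep the most profitable one."""
    candidates = sorted(set(scores))
    best = max(candidates, key=lambda t: total_payoff(labels, scores, t, payoff))
    return best, total_payoff(labels, scores, best, payoff)


# Hypothetical predictions on an unbalanced sample (2 positives out of 10).
labels = [0, 0, 0, 0, 0, 0, 0, 1, 0, 1]
scores = [0.05, 0.1, 0.15, 0.2, 0.25, 0.3, 0.35, 0.4, 0.45, 0.8]
# Assumed business values: a missed positive costs far more than a false alarm.
payoff = {"tp": 100, "fp": -10, "tn": 0, "fn": -100}

chosen_threshold, chosen_payoff = best_threshold(labels, scores, payoff)
default_payoff = total_payoff(labels, scores, 0.5, payoff)
print(chosen_threshold, chosen_payoff, default_payoff)
```

With these made-up payoffs the chosen threshold falls well below 0.5, since missing a positive is assumed to be ten times as costly as a false alarm.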

My colleague Andrew has brought up an excellent point, pb12. The why of your ML task, as opposed to the how, is also a key consideration. Andrew's points about the payoff and confusion matrices are also covered in our AML I and DataRobot for Data Scientists classes, listed below:

https://university.datarobot.com/automl-i

https://university.datarobot.com/datarobot-for-data-scientists

Those and other topics, such as model improvement and understanding, are covered in the classes, and you can also ask the instructors for clarification.

If the dataset is very large (in the GBs, or exceeding file import limits) with a very small minority class (think 0.1% or even lower), it might also be worth downsampling outside of DataRobot to reduce training time. In this case, you would create an extra weighting feature that upweights the rows kept from the downsampled class by the inverse of the downsampling ratio.

For example, downsampling the negatives (the majority class) by a factor of 100:

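| ID | feature1 | feature2 | .. | featureN | outcome | weight |
|----|----------|----------|----|----------|---------|--------|
| 1 | x11 | x12 | .. | x1N | 0 | 100 |
| 2 | x21 | x22 | .. | x2N | 0 | 100 |
| . | | | | | | |
| k | xk1 | xk2 | .. | xkN | 1 | 1 |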

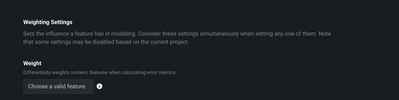

Then, in 'Advanced Options' --> 'Additional', enter the weighting feature (in our case, 'weight') before kicking off the project.

(DataRobot file size limits)
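As a rough sketch of the external downsampling described above, the following plain-Python snippet keeps each majority row with probability 1/factor and adds a weight column. The column names `outcome` and `weight` follow the example in this post. The function name, the synthetic rows, and the assumption that class 0 is the majority are illustrative only.

```python
import random


def downsample_majority(rows, factor=100, target_key="outcome", majority_value=0, seed=0):
    """Keep about 1/factor of the majority rows (weight = factor) and all other rows (weight = 1)."""
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    sampled = []
    for row in rows:
        if row[target_key] == majority_value:
            if rng.random() < 1.0 / factor:
                sampled.append({**row, "weight": float(factor)})
        else:
            sampled.append({**row, "weight": 1.0})
    return sampled


# Synthetic stand-in for a large file: 50,000 negatives and 50 positives (0.1%).
rows = [{"ID": i, "outcome": 0} for i in range(50_000)]
rows += [{"ID": 50_000 + i, "outcome": 1} for i in range(50)]

sampled_rows = downsample_majority(rows, factor=100)

kept_positives = [r for r in sampled_rows if r["outcome"] == 1]
kept_negatives = [r for r in sampled_rows if r["outcome"] == 0]
# The summed weights of the kept negatives approximate the original negative count.
weighted_negatives = sum(r["weight"] for r in kept_negatives)
print(len(kept_negatives), len(kept_positives), weighted_negatives)
```

Because rows are sampled independently, the number of kept negatives varies around the expected 1/factor share. If an exact count matters, `random.sample` over the majority rows would be one alternative.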