Tip for accessing transformed data by using Composable ML

Let’s assume that you have just completed the Autopilot modeling process for one of your projects in DataRobot. Now you are curious about the preprocessing steps that DataRobot selected automatically, and you would like to access the transformed data produced by a given preprocessing step.

Alternatively, you believe that you can improve the accuracy of your top model on the Leaderboard by modifying some of the existing preprocessing steps, but you want to verify that the output of a preprocessing step (i.e., the transformed data) is what you expect.

In either case, you can access the transformed data from any model on the Leaderboard by using Composable ML. The step-by-step process is described below.

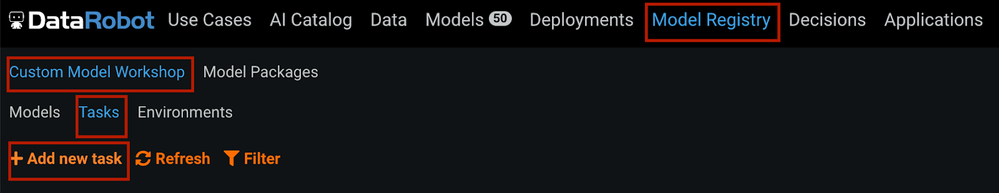

Step 1. Create and upload a Custom Task from the Model Registry tab. More information on Custom Tasks can be found in DataRobot's public GitHub repository.

We need to create a custom.py file that writes the output of any preprocessing step to a CSV file. To do this, we can use the following code in the fit hook. The transform hook returns its input unchanged, so the data passes through to the next task in the blueprint.

    from typing import List, Optional
    import pickle
    import pandas as pd
    import numpy as np
    from pathlib import Path


    def fit(
        X: pd.DataFrame,
        y: pd.Series,
        output_dir: str,
        class_order: Optional[List[str]] = None,
        row_weights: Optional[np.ndarray] = None,
        **kwargs,
    ) -> None:
        output_dir_path = Path(output_dir)
        if output_dir_path.exists() and output_dir_path.is_dir():
            # Write all input training data to a CSV so it can be downloaded via the artifact download
            X.to_csv("{}/transformed_data.csv".format(output_dir), index=False)
            # Create an empty artifact file to satisfy DRUM requirements
            with open("{}/artifact.pkl".format(output_dir), "wb") as fp:
                pickle.dump(0, fp)


    def transform(X: pd.DataFrame, transformer):
        # Pass the data through unchanged
        return X
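Before uploading, it can help to run the fit hook locally on a small toy dataset to confirm that it writes the two expected files. The following is a minimal sketch under a few assumptions: the `fit` function is repeated here only so the snippet is self-contained, and `smoke_test_fit` and the toy column names are hypothetical helpers introduced for illustration, not part of DataRobot or DRUM.

```python
import pickle
import tempfile
from pathlib import Path

import pandas as pd


def fit(X, y, output_dir, class_order=None, row_weights=None, **kwargs):
    """Same logic as the fit hook above, repeated so this sketch runs on its own."""
    output_dir_path = Path(output_dir)
    if output_dir_path.exists() and output_dir_path.is_dir():
        # Save the incoming data so it can be inspected later
        X.to_csv(output_dir_path / "transformed_data.csv", index=False)
        # Placeholder artifact, as in the original hook
        with open(output_dir_path / "artifact.pkl", "wb") as fp:
            pickle.dump(0, fp)


def smoke_test_fit():
    """Hypothetical helper: call fit on toy data and report what it wrote."""
    X = pd.DataFrame({"feature_a": [1.0, 2.0, 3.0], "feature_b": [0.5, 0.0, 1.5]})
    y = pd.Series(["x", "y", "x"])
    with tempfile.TemporaryDirectory() as tmp_dir:
        fit(X, y, tmp_dir)
        written = sorted(p.name for p in Path(tmp_dir).iterdir())
        # Read the CSV back before the temporary directory is deleted
        round_trip = pd.read_csv(Path(tmp_dir) / "transformed_data.csv")
    return written, round_trip


written_files, csv_contents = smoke_test_fit()
print(written_files)
print(csv_contents.shape)
```

If both `transformed_data.csv` and `artifact.pkl` show up in the list of written files, the hook is behaving as intended on your machine; this does not replace testing the task inside DataRobot itself.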

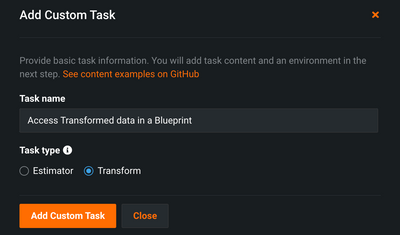

Once we have created this .py file, we can upload the custom task to DataRobot as follows:

Note that we have used the prebuilt DataRobot environment "[DataRobot] Python 3 Scikit-Learn Drop-In" to run the custom task, but if necessary, you can use your own Custom Environment.

Step 2. Modify a DataRobot-generated model to add this new Custom Task and retrain the model.

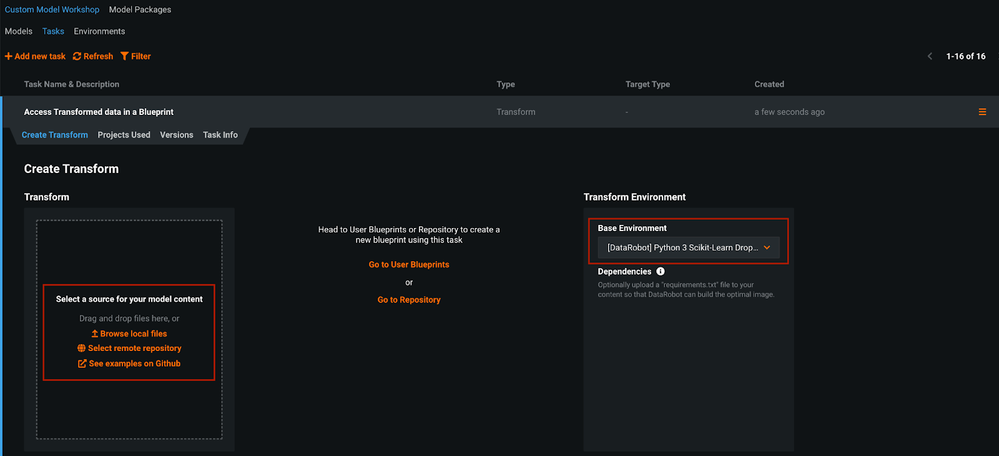

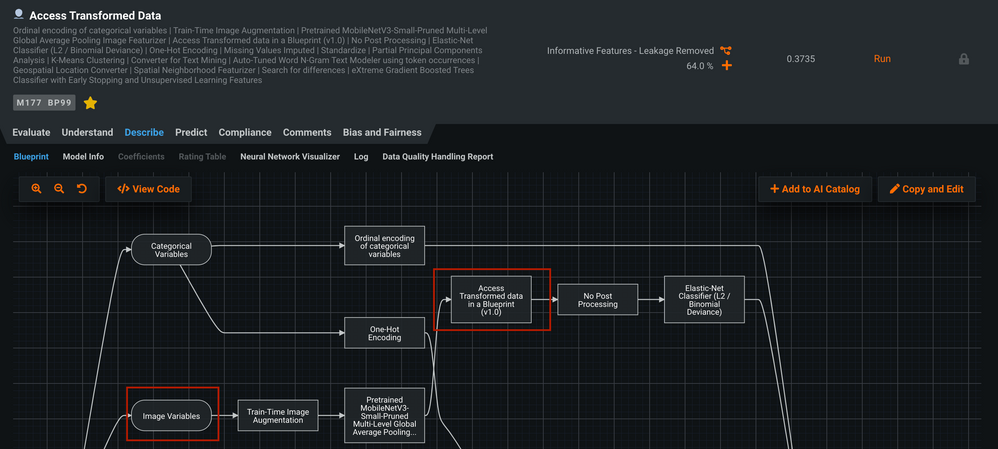

For this example, let’s assume that we would like to explore the output of two sequential preprocessing steps (see the screenshot below) that DataRobot selected automatically to handle a text variable (Consumer complaint narrative) in a multiclass ML project that aims to predict the type of consumer complaint.

First, we need to find a blueprint of interest (e.g., the 64% sample size version of the top model on the Leaderboard) and choose to copy and edit the blueprint.

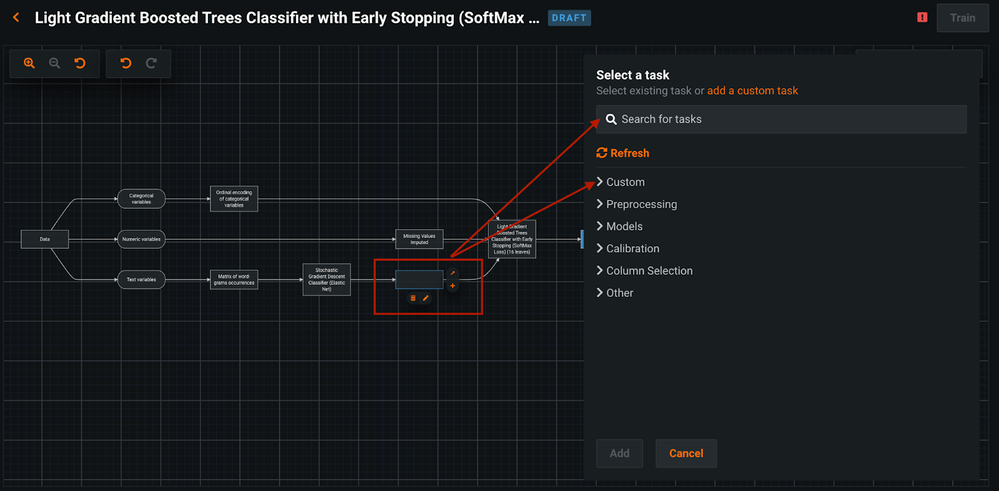

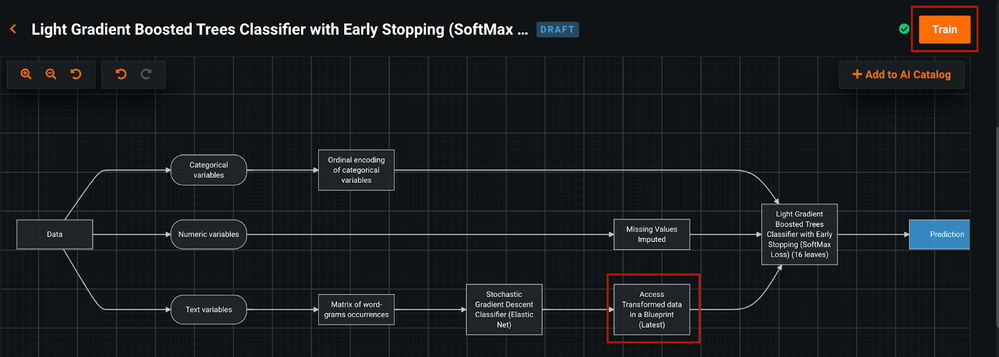

Once we have copied the blueprint, we need to 1) add a new task, 2) select the custom task (either by typing its name, “Access Transform data in a Blueprint”, or by finding it in the “Custom” group of the right-hand menu), and 3) retrain the blueprint, as the following two screenshots show:

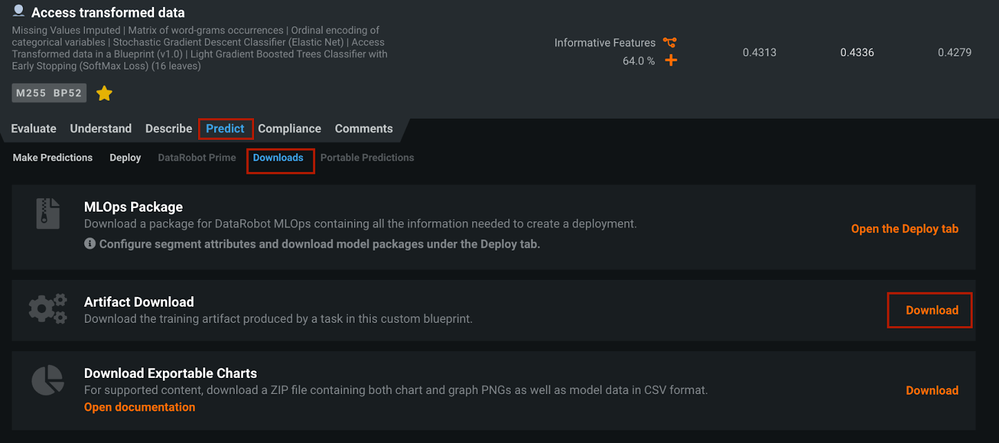

Step 3. Download the Model Artifact of the retrained model

Once the blueprint has been retrained, we can download the Model Artifact using the Download option on the Predict tab of the model.

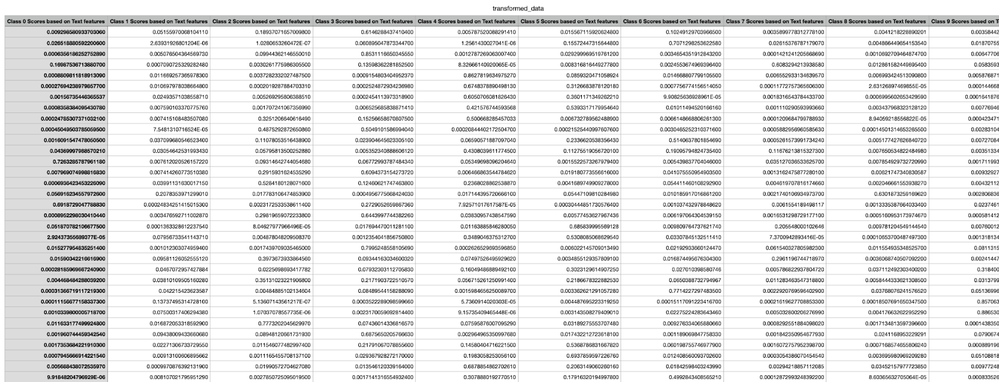

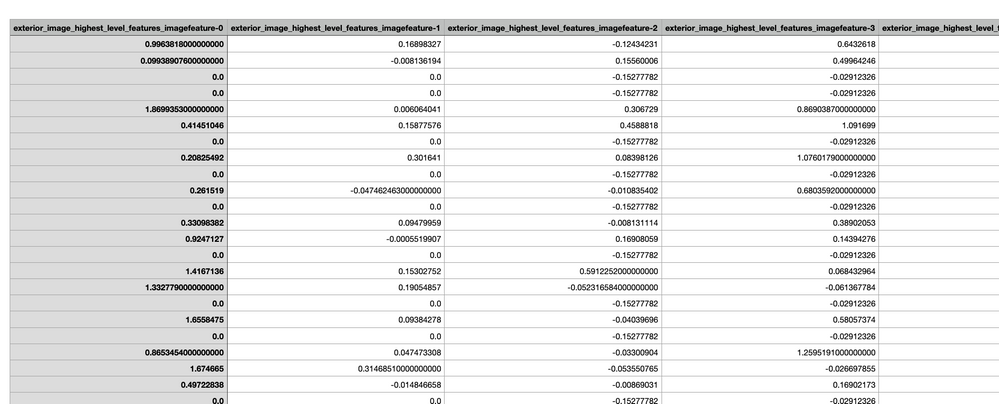

Step 4. Inspect the transformed data

After we have downloaded the model artifact for this modified blueprint, we can open the CSV file and explore the transformed data, as the accompanying figure shows.
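For a quick first look without opening the file by hand, the CSV can also be loaded with pandas. This is a minimal sketch: `summarize_transformed` is a hypothetical helper name, and it assumes you have already located `transformed_data.csv` (the file name used in the fit hook) inside the downloaded artifact.

```python
import pandas as pd


def summarize_transformed(csv_path):
    """Return the shape of the transformed data and a per-column summary."""
    df = pd.read_csv(csv_path)
    summary = pd.DataFrame(
        {
            "dtype": df.dtypes.astype(str),  # inferred column types
            "n_missing": df.isna().sum(),    # missing values per column
            "n_unique": df.nunique(),        # distinct non-missing values per column
        }
    )
    return df.shape, summary
```

Calling `shape, summary = summarize_transformed("transformed_data.csv")` and printing both values gives the number of rows and columns the preprocessing step produced, along with a per-column overview that makes unexpected types or missing values easy to spot.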

Let me know if you have further questions about this tip!

This is awesome. Does this also work with image-based data?

Thanks for this tip, @Jaume Masip!