Tips for using DataRobot’s API for Custom Inference Models


Let’s assume that you have a custom, pretrained model and want to deploy and monitor it in DataRobot. To do this, you can use DataRobot’s Custom Model Workshop, which lets you upload a model artifact to create, test, and deploy custom inference models to DataRobot’s centralized deployment hub.

You can use either DataRobot’s GUI (Graphical User Interface) or DataRobot’s Python API client to run all the steps needed to create, test, and deploy a custom inference model.

Let’s take a closer look at how we can automate all these steps using DataRobot’s Python API package.

Step 1 Install Libraries. First of all, we need to clone the public GitHub repository and install the DataRobot and DRUM libraries:

!git clone https://github.com/datarobot/datarobot-user-models.git

cd datarobot-user-models

pip install -r public_dropin_environments/python3_sklearn/requirements.txt

pip install datarobot-drum

pip install datarobot

Step 2 MLDEV - Train a custom XGBoost model with scikit-learn pipeline. Now, let’s assume that we have some training data and want to train a custom XGBoost model for a binary classification use case. We will use a scikit-learn pipeline as an example.

Let's install the Python modules (PyYAML==5.3.1 xgboost==1.2.1) we need using the requirements file

!pip install -r ./custom_model_xgboost/requirements.txt -q

import pandas as pd

import joblib

import numpy as np

import json

from xgboost import XGBClassifier

from sklearn.pipeline import Pipeline

from sklearn.compose import ColumnTransformer

from sklearn.preprocessing import StandardScaler, OneHotEncoder

from sklearn.impute import SimpleImputer

We will now build, as an example, a very simple XGBoost classification model on top of a scikit-learn preprocessing pipeline to predict whether a loan will default.

train = pd.read_csv('./data/custom_training_10K.csv')

X = train.drop('is_bad', axis=1)

y = train.pop('is_bad')

train.head(5)

Let’s define and fit the preprocessing step per type of feature column

# Preprocessing for numerical features

numeric_features = list(X.select_dtypes('int64').columns)

for c in numeric_features:

    X[c] = X[c].fillna(0)

numeric_transformer = Pipeline(steps=[

    ('scaler', StandardScaler())])

# Preprocessing for categorical features

categorical_features = list(X.select_dtypes('object').columns)

for c in categorical_features:

    X[c] = X[c].fillna('missing')

categorical_transformer = Pipeline(steps=[

    ('OneHotEncoder', OneHotEncoder(handle_unknown='ignore'))])

# Preprocessor with all of the steps

preprocessor = ColumnTransformer(

    transformers=[

        ('num', numeric_transformer, numeric_features),

        ('cat', categorical_transformer, categorical_features)])

# Full preprocessing pipeline

pipeline = Pipeline(steps=[('preprocessor', preprocessor)])

# Fit the preprocessing pipeline

pipeline.fit(X, y)

# Preprocess X

preprocessed = pipeline.transform(X)

# The model could also be trained directly on the sparse matrix. It is converted to a pandas DataFrame here because the hook function in custom.py expects a pandas DataFrame for scoring.

preprocessed = pd.DataFrame.sparse.from_spmatrix(preprocessed)

Finally, let’s train an XGBoost classifier and save both the custom model and the preprocessing pipeline as pickle files. We will later upload these files to DataRobot.

model = XGBClassifier(

    colsample_bylevel=0.2,

    max_depth=10,

    learning_rate=0.02,

    n_estimators=300,

    eval_metric='logloss',

)

model.fit(preprocessed, y)

joblib.dump(pipeline,'custom_model_xgboost/preprocessing.pkl')

joblib.dump(model, 'custom_model_xgboost/model.pkl')
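The two pickle files saved above are loaded by custom.py, which this post uploads later but never lists. Below is a minimal sketch of what such a file might contain. It assumes DRUM's `load_model` and `score` hooks, assumes the class labels arrive as the `positive_class_label` and `negative_class_label` keyword arguments, and mirrors the fill values used during training; the real custom.py in the repository may differ.

```python
"""Hypothetical custom.py: DRUM hooks for the saved pipeline and XGBoost model."""
import os

import pandas as pd


def load_model(code_dir):
    """Called once by DRUM at startup; code_dir is the folder holding the artifacts."""
    import joblib  # lazy import so the module can be inspected without joblib installed

    return {
        "pipeline": joblib.load(os.path.join(code_dir, "preprocessing.pkl")),
        "model": joblib.load(os.path.join(code_dir, "model.pkl")),
    }


def score(data, model, **kwargs):
    """Return one probability column per class label for a binary target."""
    data = data.copy()  # leave the caller's DataFrame untouched
    # Mirror the training-time fills, because the saved pipeline has no imputer.
    for col in data.columns:
        fill = 0 if pd.api.types.is_numeric_dtype(data[col]) else "missing"
        data[col] = data[col].fillna(fill)

    features = model["pipeline"].transform(data)
    # The model was fit on a DataFrame, so wrap the transformed output in one.
    if hasattr(features, "tocsr"):  # duck-typed check for a SciPy sparse matrix
        features = pd.DataFrame.sparse.from_spmatrix(features)
    else:
        features = pd.DataFrame(features)

    probabilities = model["model"].predict_proba(features)
    positive = kwargs.get("positive_class_label", "1")
    negative = kwargs.get("negative_class_label", "0")
    # Assumes predict_proba orders columns as [negative class, positive class].
    return pd.DataFrame({negative: probabilities[:, 0], positive: probabilities[:, 1]})
```

Returning both preprocessing and model from `load_model` keeps scoring in a single hook, at the cost of repeating the training-time fill logic by hand.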

Step 3 Test models locally. Before uploading a custom model to DataRobot, it is good practice to test it locally with DRUM (the DataRobot Model Runner tool). DRUM verifies that a custom model can run and make predictions. This local testing is for development purposes only, so we will still need to test the custom inference model in the Custom Model Workshop after uploading it to the DataRobot platform.

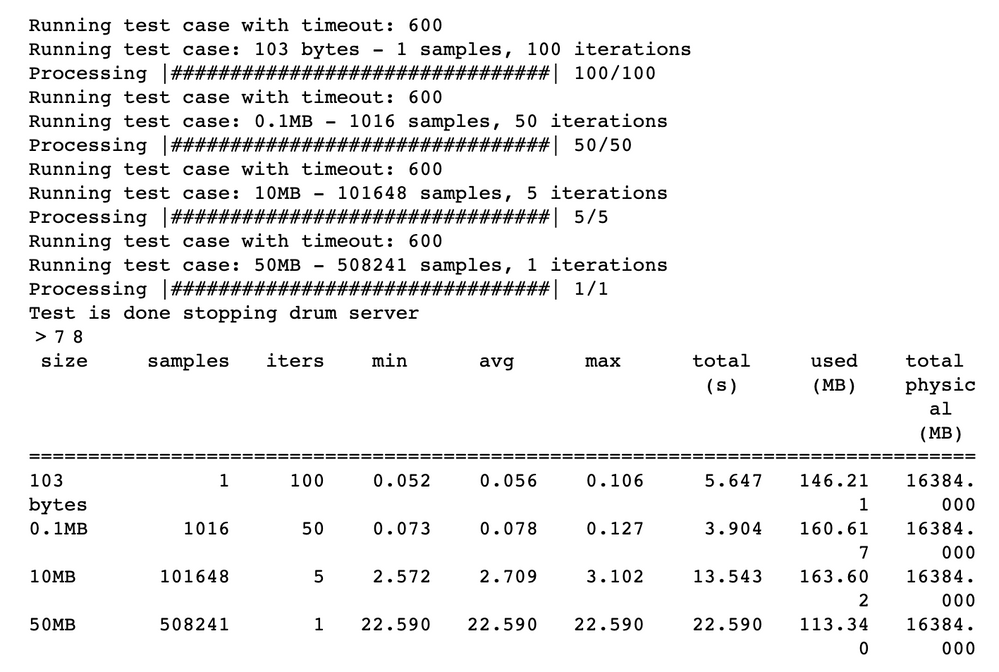

We will now use DRUM to test how the model performs by computing latency times and memory usage for several different test case sizes. A report is generated after this process is completed.


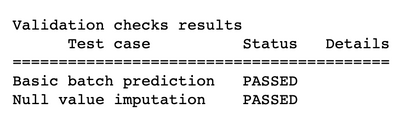

!drum perf-test --code-dir ./custom_model_xgboost --input ./data/custom_scoring_10K.csv --target-type binary --positive-class-label '1' --negative-class-label '0'

We can also validate the model to detect and address issues before deployment. Running these tests is highly encouraged, as they are the same ones that DataRobot performs automatically before deploying models. Specifically, DRUM will now test null-value imputation by setting each feature in the dataset to "missing" and then feeding the features to the model. We will send the results to validation.log and show the outcome below:

!drum validation --code-dir ./custom_model_xgboost --input ./data/custom_scoring_10K.csv --target-type binary --positive-class-label '1' --negative-class-label '0' > validation.log

!cat validation.log

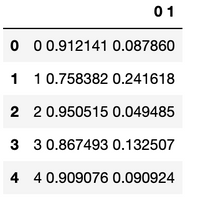
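To make the null-value check more concrete, here is a rough illustration of the idea in plain Python. It is not DRUM's implementation: `null_value_check` and `toy_predict` are hypothetical names, records are plain dictionaries rather than a DataFrame, and the sketch assumes features are blanked one at a time.

```python
def null_value_check(rows, predict):
    """Blank out one feature at a time and report the features that break scoring.

    rows: list of dicts, one per record.
    predict: callable that returns one prediction per row.
    """
    failures = {}
    for feature in rows[0]:
        # Copy every row with this single feature replaced by a missing value.
        blanked = [{**row, feature: None} for row in rows]
        try:
            predictions = predict(blanked)
        except Exception as exc:
            failures[feature] = f"{type(exc).__name__}: {exc}"
            continue
        if len(predictions) != len(rows):
            failures[feature] = "wrong number of predictions"
    return failures


def toy_predict(rows):
    # Toy scorer: ignores 'grade' entirely but cannot cope with a missing 'income'.
    return [min(1.0, 10000 / row["income"]) for row in rows]


sample = [{"income": 50000, "grade": "A"}, {"income": 20000, "grade": "B"}]
report = null_value_check(sample, toy_predict)
print(report)  # only 'income' is reported, with a TypeError message
```

A feature that appears in the report is one the scoring code must impute before the model sees it.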

Finally, we want to use our model to make predictions; to do this, we'll leverage DRUM and its ability to natively handle our Scikit-Learn model. All we need to do is tell DRUM where the model resides and what data we wish to score.

!drum score --code-dir ./custom_model_xgboost --input ./data/custom_scoring_10K.csv --target-type binary --positive-class-label '1' --negative-class-label '0' > predictions.csv

Let’s have a look at the predictions:

pd.read_csv("predictions.csv").head()

Step 4 Upload custom model artifacts to DataRobot. Once we have tested the custom model locally, we can upload all the artifacts to DataRobot. The ultimate aim is to deploy the model in DataRobot's centralized deployment hub.

To upload the custom model artifacts, we need to first import several packages and connect to the DataRobot application.
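The connection call in the code that follows reads credentials from a config.yaml file, which this post does not show. A minimal sketch for creating one is given here, assuming the client's usual two keys, `endpoint` and `token`. The helper name `write_datarobot_config`, the default endpoint URL, and the placeholder token are illustrative only; use the endpoint and API key for your own account.

```python
from pathlib import Path


def write_datarobot_config(path, token, endpoint="https://app.datarobot.com/api/v2"):
    """Write a YAML config holding the endpoint and API token for the DataRobot client."""
    path = Path(path)
    path.write_text(f"endpoint: {endpoint}\ntoken: {token}\n", encoding="utf-8")
    path.chmod(0o600)  # keep the API token readable by the owner only
    return path


# Placeholder token for illustration; never commit a real token to version control.
config_file = write_datarobot_config("config.yaml", token="YOUR_API_TOKEN")
print(config_file.read_text(encoding="utf-8"))
```

Keeping the token in a file outside the notebook avoids pasting it into code that may later be shared.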

import datarobot as dr

from datarobot import Project, Deployment

import datetime as dt

from datetime import datetime

import dateutil.parser

import os

import re

from importlib import reload

dr.Client(config_path='config.yaml')

Once we have connected to the DataRobot application, we need to select an Environment in which to run the custom inference model. Instead of creating our own Environment, we will select a pre-built Environment provided by DataRobot and add the required packages and dependencies to it.

# List all existing base environments

execution_environments = dr.ExecutionEnvironment.list()

execution_environments

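for execution_environment in execution_environments:

    #print(execution_environment)

    if execution_environment.name == '[DataRobot] Python 3 Scikit-Learn Drop-In':

        break

else:

    # Without this guard, the loop would silently keep the last environment in the list

    raise ValueError('Drop-in environment not found; check the names listed above')

BASE_ENVIRONMENT = execution_environment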

environment_versions = dr.ExecutionEnvironmentVersion.list(execution_environment.id)

BASE_ENVIRONMENT_VERSION = environment_versions[0]

print(BASE_ENVIRONMENT)

print(BASE_ENVIRONMENT_VERSION)

print(BASE_ENVIRONMENT.id)

Now it is time to create the Custom Model Package. This requires three tasks: 1) add a new, empty Custom Inference Model package, 2) add the artifacts to assemble the Custom Inference Model, and 3) update the selected pre-built Environment with the model's dependencies.

The code for each of these tasks is below:

# create new custom model

custom_model = dr.CustomInferenceModel.create(

    name='Loan Default Custom - 13-05-2022 - API Python',

    target_type=dr.TARGET_TYPE.BINARY,

    target_name="is_bad",

    positive_class_label="1",

    negative_class_label="0",

    description="XGboost model. Preprocess data using scikit-learn pipeline. Custom.py preprocess and score",

    language="Python"

)

# Create new custom model version in DR

print("Upload new version of model to DataRobot")

model_version = dr.CustomModelVersion.create_clean(

    custom_model_id=custom_model.id,

    base_environment_id=BASE_ENVIRONMENT.id,

    files=['./custom_model_xgboost/custom.py',

           './custom_model_xgboost/model.pkl',

           './custom_model_xgboost/preprocessing.pkl',

           './custom_model_xgboost/requirements.txt'],

)

# Update dependencies

# requirements.txt was uploaded with this version, so build the environment with those dependencies

build_info = dr.CustomModelVersionDependencyBuild.start_build(

    custom_model_id=custom_model.id,

    custom_model_version_id=model_version.id,

    max_wait=3600,  # 1 hour timeout

)

Step 5 Test the custom inference model in DataRobot. Before deploying, let’s check that the custom model is functional by running it in the selected environment against prediction test data.

We will first upload the training and inference datasets; the inference dataset is the one used for testing predictions.

df = pd.read_csv('./data/custom_training_10K.csv')

df_inference=pd.read_csv('./data/custom_scoring_10K.csv')

train_dataset = dr.Dataset.create_from_in_memory_data(df, categories = ["TRAINING"])

pred_test_dataset = dr.Dataset.create_from_in_memory_data(df_inference)

custom_model.assign_training_data(train_dataset.id)

Once we have uploaded the inference dataset we can test our custom inference model. The code used is presented below:

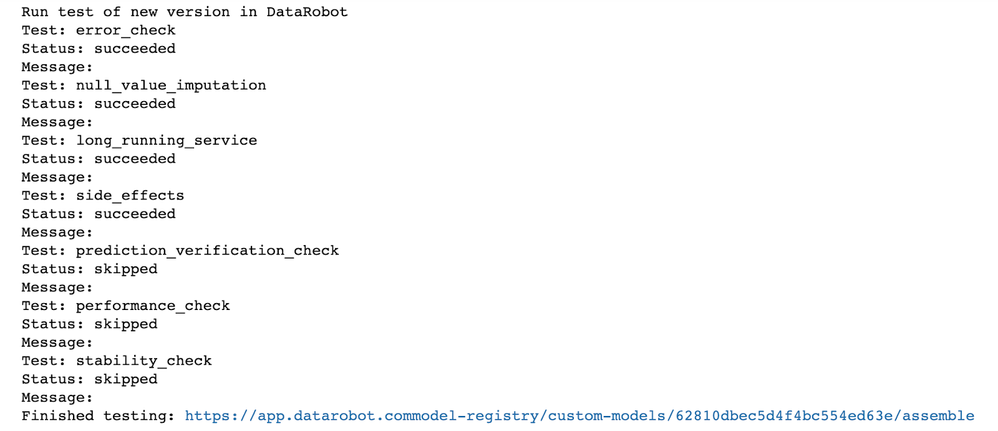

# Test new version in DR

print("Run test of new version in DataRobot")

custom_model_test = dr.CustomModelTest.create(

    custom_model_id=custom_model.id,

    custom_model_version_id=model_version.id,

    dataset_id=pred_test_dataset.id,

    max_wait=3600,  # 1 hour timeout

)

custom_model_test.overall_status

# Option 1

HOST = "https://app.datarobot.com"

for name, test in custom_model_test.detailed_status.items():

    print('Test: {}'.format(name))

    print('Status: {}'.format(test['status']))

    print('Message: {}'.format(test['message']))

# Note the leading slash: HOST has no trailing slash of its own

print("Finished testing: " + HOST + "/model-registry/custom-models/" + custom_model.id + "/assemble")

The loop prints the status and message of each of the different tests.
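Before moving on to deployment, the same `detailed_status` dictionary can also be checked programmatically instead of read by eye. The sketch below is an illustration only: `failed_checks` and `workshop_url` are hypothetical helpers, the status string `"succeeded"` and the check names in the example are assumptions about what the API reports, and the URL path is the one used in the post.

```python
def failed_checks(detailed_status, ok_statuses=("succeeded",)):
    """Return {check name: message} for every check whose status is not acceptable."""
    return {
        name: result.get("message", "")
        for name, result in detailed_status.items()
        if result.get("status") not in ok_statuses
    }


def workshop_url(host, custom_model_id):
    """Build the Custom Model Workshop link with exactly one slash after the host."""
    return f"{host.rstrip('/')}/model-registry/custom-models/{custom_model_id}/assemble"


# Example with made-up results shaped like the dictionary printed above.
example_status = {
    "error_check": {"status": "succeeded", "message": ""},
    "null_value_imputation": {"status": "failed", "message": "NaN in output"},
}
problems = failed_checks(example_status)
if problems:
    print("Do not deploy yet:", problems)
print(workshop_url("https://app.datarobot.com", "abc123"))
```

If your tests report other acceptable values, extend `ok_statuses` rather than editing the comparison.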

Step 6 Deploy the custom inference model. Finally, we can deploy the custom inference model to DataRobot’s dedicated prediction server. This takes only a few lines of code, as shown below:

# Create new deployment

deployment = dr.Deployment.create_from_custom_model_version(

    custom_model_version_id=model_version.id,

    default_prediction_server_id=dr.PredictionServer.list()[1].id,  # second server in the list; adjust the index for your account

    label="Loan Default predictions - Custom Model Demo 13-05-2022",

    max_wait=600

)

At any point while importing the custom inference model into DataRobot, we can also use DataRobot’s GUI to check that we are getting the expected outcomes.

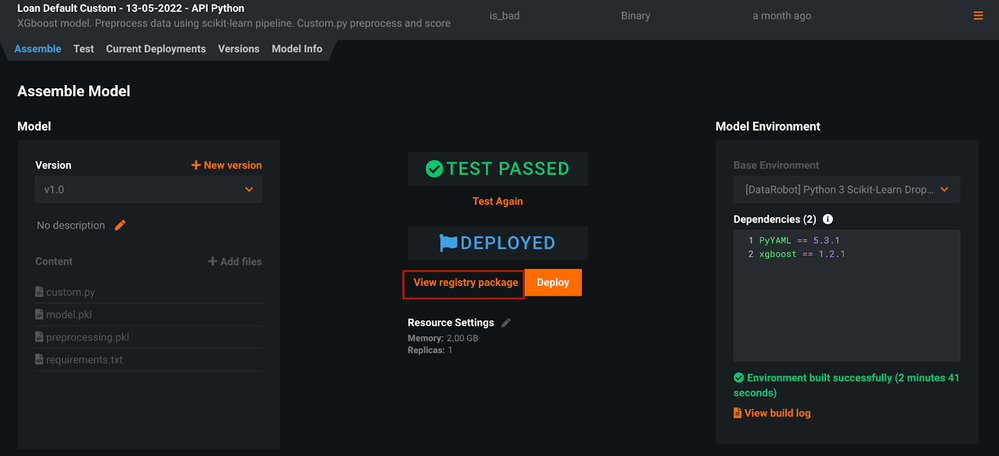

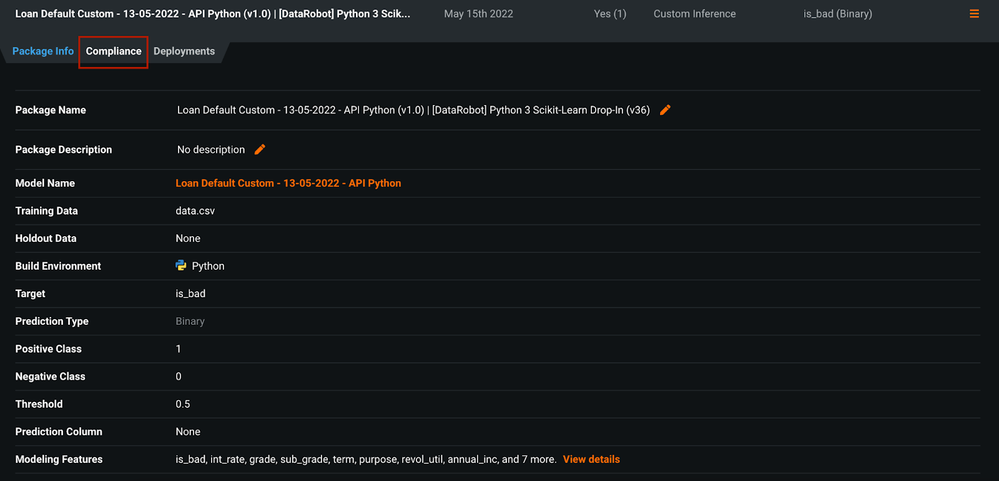

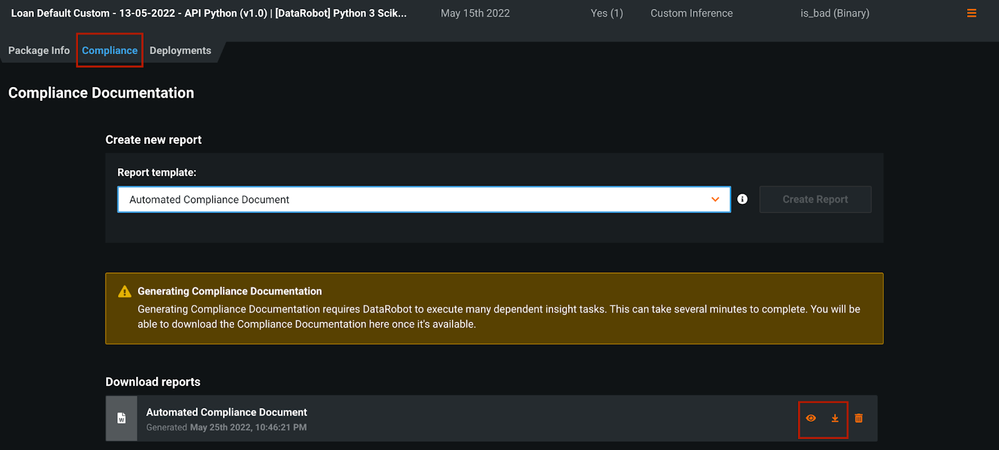

For example, the Custom Model Workshop for this custom inference model is shown below. From there, we can review that the custom model has successfully been tested and deployed. We could also generate and access the Automated Compliance report for this model, if necessary.

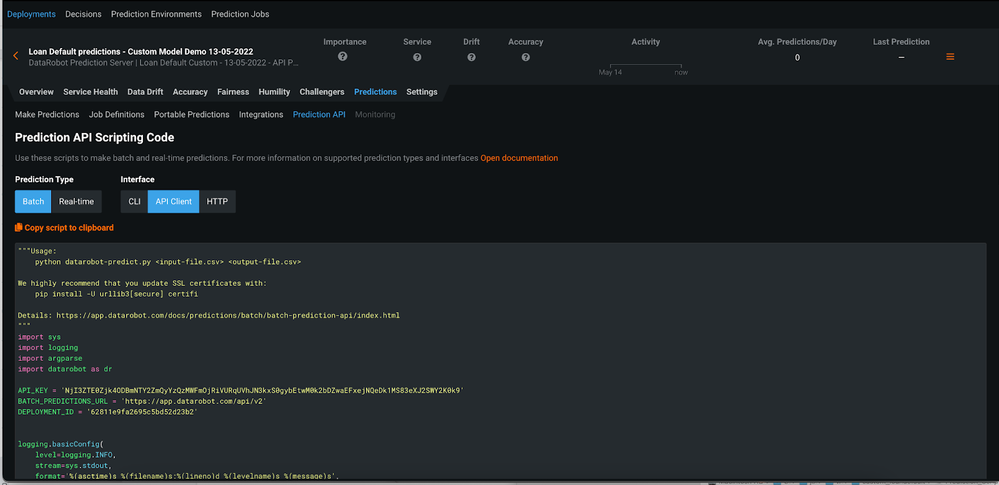

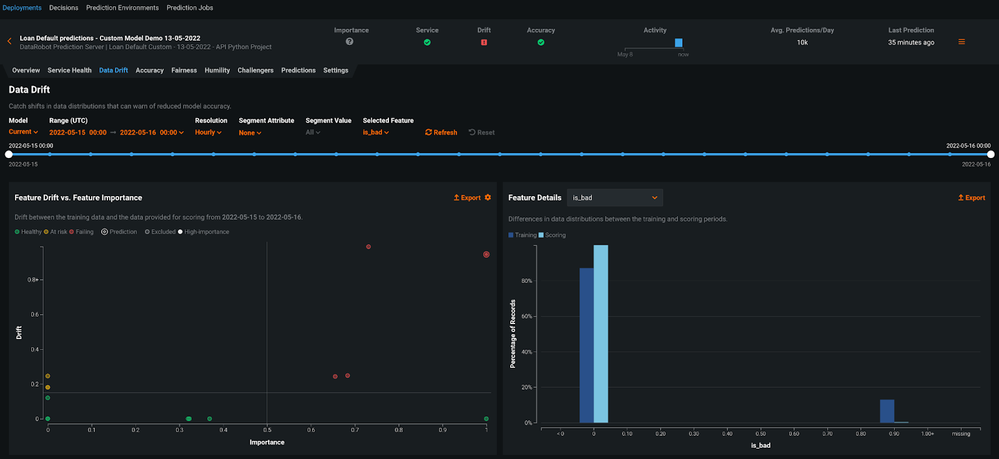

Likewise, we can explore the deployment of this custom inference model from DataRobot’s centralized MLOps hub. From there, we can start scoring data with the model and use DataRobot's MLOps capabilities.

Let me know if you have further questions about this tip post!