Use Humility Rules for Crystal-Clear Communications

When you deploy a model, it is always best to be explicit about prediction behavior. However, instrumenting a deployment to deal with corner cases often requires careful communication between data scientists and software engineers, and that leaves plenty of room for miscommunication. To make prediction behavior explicit and well-defined, use DataRobot MLOps humility rules.

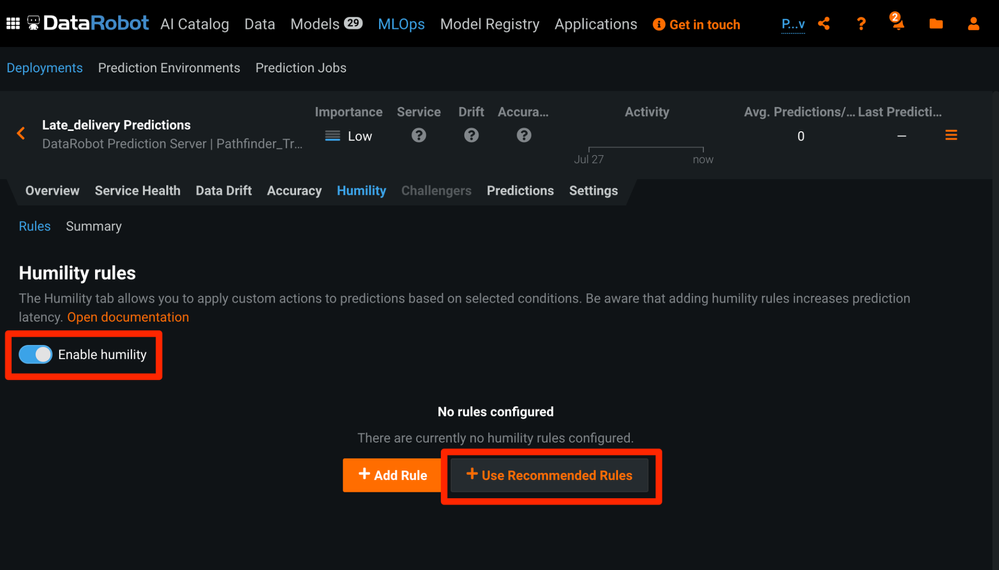

To add humility rules to a model deployment, enable them under the Humility tab.

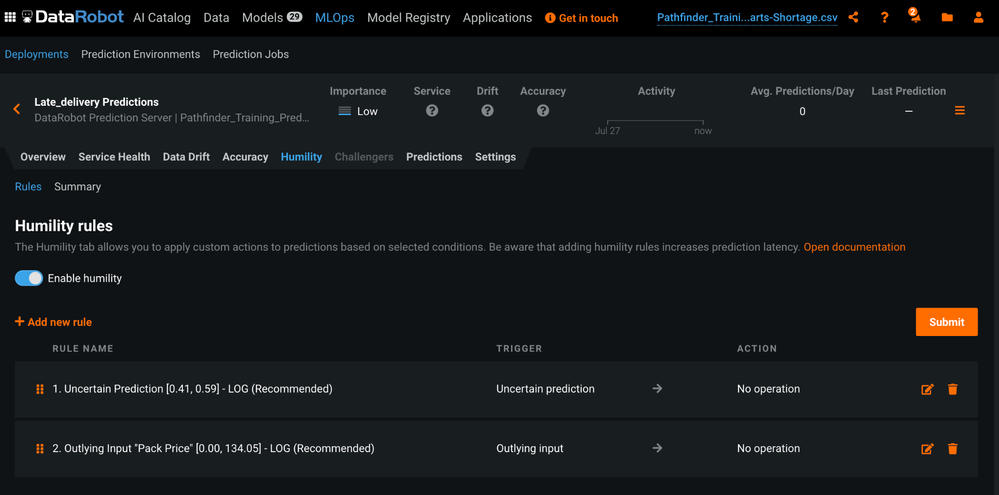

Check out the Recommended Rules first. DataRobot will analyze your chosen model and deployment for corner cases you might want to consider addressing.

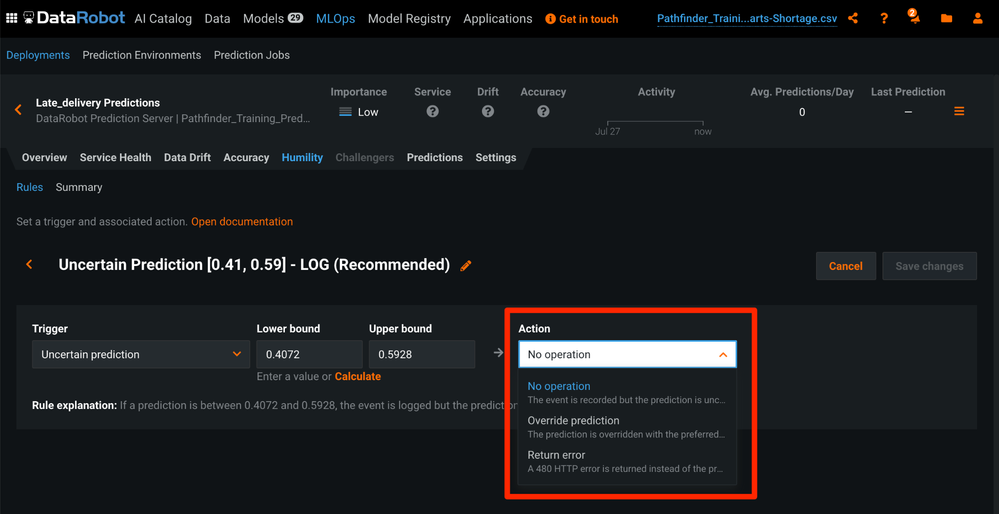

From there, deciding what you and your team want DataRobot to do in these situations is straightforward. In some cases, you may simply want DataRobot to log predictions that trigger a humility rule; at other times, you may want to override the prediction based on business rules you and your team have agreed upon.
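To make the difference between logging and overriding concrete, here is a minimal sketch in plain Python. It does not use the DataRobot API: the `HumilityRule` class, its field names, the rule names, and the thresholds are all hypothetical, and only illustrate the kind of decision you and your team would be agreeing on.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class HumilityRule:
    """Hypothetical stand-in for a rule; not a DataRobot class."""
    name: str
    trigger: Callable[[float], bool]       # True when the prediction looks unreliable
    action: str                            # "log" or "override"
    override_value: Optional[float] = None  # only used when action == "override"


def apply_rules(prediction: float, rules: List[HumilityRule]) -> Tuple[float, List[str]]:
    """Return the (possibly overridden) prediction and the names of triggered rules."""
    triggered = []
    result = prediction
    for rule in rules:
        # Each trigger is evaluated against the original prediction.
        if not rule.trigger(prediction):
            continue
        triggered.append(rule.name)
        if rule.action == "override" and rule.override_value is not None:
            result = rule.override_value
    return result, triggered


# Thresholds below are made up for illustration.
rules = [
    HumilityRule("uncertain_band", lambda p: 0.4 <= p <= 0.6, action="log"),
    HumilityRule("implausibly_high", lambda p: p > 0.99,
                 action="override", override_value=0.99),
]

print(apply_rules(0.5, rules))    # log-only rule fires; prediction is unchanged
print(apply_rules(0.995, rules))  # override rule fires; prediction is replaced
print(apply_rules(0.8, rules))    # no rule fires
```

A log-only rule leaves the prediction alone and just records that it fired, while an override rule swaps in the agreed business value.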

If your model is consumed through an API integration, you can even set up the rules to return an error and have your software developers handle that error explicitly, rather than crossing your fingers and hoping everything was communicated clearly and is up to date.

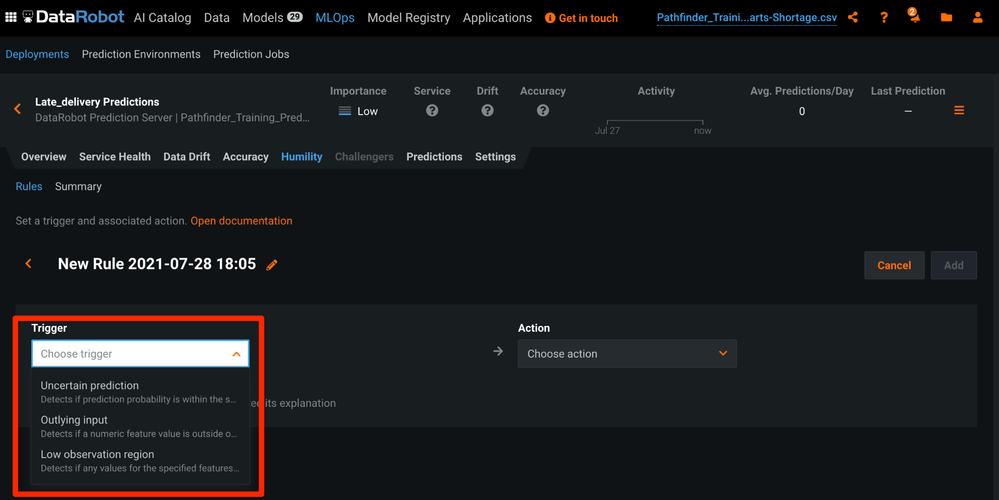
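On the consuming side, handling that error explicitly might look something like the sketch below. This is only an illustration under stated assumptions: the status code `480` is a placeholder you should confirm for your own deployment, and the exception class, function names, response body shape, and `manual_review` fallback are hypothetical rather than part of any DataRobot client.

```python
import json

# Assumption: placeholder status code meaning "a humility rule fired".
# Confirm the real value for your deployment before relying on it.
HUMILITY_ERROR_STATUS = 480


class HumilityRuleTriggered(Exception):
    """Raised when the prediction service reports that a humility rule fired."""


def parse_prediction_response(status_code: int, body: str) -> dict:
    """Turn a raw HTTP response into predictions, or raise a specific error."""
    if status_code == HUMILITY_ERROR_STATUS:
        raise HumilityRuleTriggered(body)
    if status_code != 200:
        raise RuntimeError(f"Prediction request failed with status {status_code}")
    return json.loads(body)


def score_or_fallback(status_code: int, body: str, fallback: str = "manual_review") -> dict:
    """Route unreliable predictions to an agreed fallback instead of using them."""
    try:
        return {"status": "scored", "result": parse_prediction_response(status_code, body)}
    except HumilityRuleTriggered:
        # The humility rule fired: do not use the prediction, take the agreed path.
        return {"status": fallback, "result": None}


# The response body shape here is hypothetical.
print(score_or_fallback(200, '{"prediction": 0.8}'))
print(score_or_fallback(HUMILITY_ERROR_STATUS, "humility rule triggered"))
```

Keeping the humility case as its own exception type means it cannot be silently lumped in with generic failures, so the fallback behavior stays a deliberate decision in the code.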

And if you need to address other situations that may arise, you can easily add another rule.

Humility rules were designed to put you in charge of how to handle common situations where predictions may not be reliable. If you'd like me to explain further, or you have more tips you'd like me to share, just comment below. I'm always interested in sharing more ways to use DataRobot. Looking forward to hearing from you.