Boosting specific model

I was wondering if there is a way to build only extreme gradient boosting models. I am trying to compare a DataRobot-tuned XGBoost model to a regular one. The optimal SVM model seems to be overfitting: it always predicts 0 and never predicts the positive class. Could this be due to class imbalance in the data? What do you recommend?

Where is the regular xgboost?

One thing you can do is an external baseline comparison.

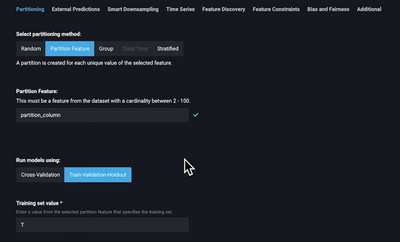

Step 1: For the model you created outside DataRobot, add a partition feature with two levels (T and H, for instance), where T stands for the training set and H for the holdout or testing set. Put the prediction values in another feature; let's call it xgboost_output.
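
As a rough illustration of Step 1, here is a minimal sketch of preparing such a dataset in plain Python. The column name `partition`, the `id` key, the toy rows, and the helper names are all assumptions made for this example; only the T/H levels and the `xgboost_output` column come from the description above.

```python
import csv
import io


def add_partition_and_predictions(rows, train_ids, predictions, id_key="id"):
    """Return copies of rows with a T/H partition column and the external predictions."""
    prepared = []
    for row in rows:
        new_row = dict(row)
        # T = row was used to train the external model, H = holdout/testing row
        new_row["partition"] = "T" if row[id_key] in train_ids else "H"
        # Prediction produced by the model built outside DataRobot
        new_row["xgboost_output"] = predictions[row[id_key]]
        prepared.append(new_row)
    return prepared


def to_csv(rows):
    """Serialize the prepared rows to CSV text, ready to be saved and uploaded."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()), lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


# Toy data (hypothetical): four rows, three used for training, one held out
rows = [
    {"id": 1, "feature_a": 0.2, "target": 0},
    {"id": 2, "feature_a": 0.9, "target": 1},
    {"id": 3, "feature_a": 0.4, "target": 0},
    {"id": 4, "feature_a": 0.7, "target": 1},
]
train_ids = {1, 2, 3}
predictions = {1: 0.10, 2: 0.85, 3: 0.30, 4: 0.65}

prepared = add_partition_and_predictions(rows, train_ids, predictions)
csv_text = to_csv(prepared)
```

The important point is that the holdout rows are the same ones the external model was evaluated on, so that both sets of scores are computed on identical data.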

Step 2: Upload the new dataset to a DataRobot project.

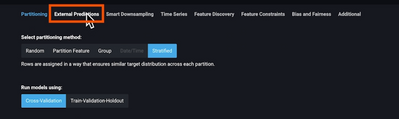

Step 3: Go to Advanced Options and click External Predictions.

Then select the feature column that holds your external model's predictions (xgboost_output, for instance).

Step 4: Set Partition Features

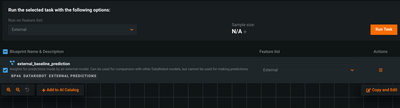

Step 5: For the Autopilot mode, choose Manual. The model will be added to the repository. Run the task.

Step 6: Now you can run Autopilot in Quick mode, for instance, to have DataRobot build models on your dataset using your partition.

The project will then contain your model alongside all the other models, and you can choose further models to run.

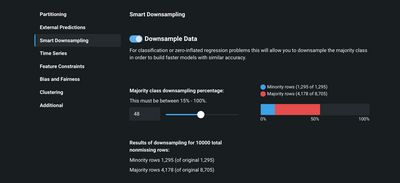

You stated that the SVM is always predicting 0. What is the percentage of each class in the dataset? If the minority class is less than 20%, try downsampling. Downsampling helps in these conditions and can improve your results.
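
To make the class-percentage check and the downsampling idea concrete, here is a minimal sketch in plain Python. The helper names, the `target` column, the 95/5 toy split, and the `ratio` parameter are assumptions for illustration; this is simple random downsampling of the majority class, not necessarily what DataRobot does internally.

```python
import random
from collections import Counter


def class_percentages(labels):
    """Return the share of each class as a percentage of all rows."""
    counts = Counter(labels)
    total = len(labels)
    return {cls: 100.0 * n / total for cls, n in counts.items()}


def downsample_majority(rows, label_key, ratio=1.0, seed=0):
    """Randomly drop majority-class rows until at most ratio * minority count remain."""
    counts = Counter(r[label_key] for r in rows)
    majority = max(counts, key=counts.get)
    minority_n = min(counts.values())
    keep_n = min(counts[majority], int(ratio * minority_n))

    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    majority_rows = [r for r in rows if r[label_key] == majority]
    other_rows = [r for r in rows if r[label_key] != majority]

    result = other_rows + rng.sample(majority_rows, keep_n)
    rng.shuffle(result)
    return result


# Toy data (hypothetical): 95% negative class, 5% positive class
rows = [{"target": 0} for _ in range(95)] + [{"target": 1} for _ in range(5)]

before = class_percentages([r["target"] for r in rows])
balanced = downsample_majority(rows, "target", ratio=1.0)
after = class_percentages([r["target"] for r in balanced])
```

Downsampling is normally applied to the training rows only, so that the holdout set still reflects the real class distribution.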

Without access to your data or insight into it, I can only say that if your predictions are all 0, you most likely have extreme class imbalance.
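
A small worked example may help show why a model can get away with predicting only 0 on imbalanced data. The 95/5 split and the helper functions below are assumptions chosen for illustration: a model that never predicts the positive class still scores high accuracy, while its recall on the positive class is zero.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(1 for t, p in zip(y_true, y_pred) if t == p)
    return correct / len(y_true)


def recall(y_true, y_pred, positive=1):
    """Fraction of actual positives that the model predicted as positive."""
    actual_positives = sum(1 for t in y_true if t == positive)
    true_positives = sum(
        1 for t, p in zip(y_true, y_pred) if t == positive and p == positive
    )
    return true_positives / actual_positives if actual_positives else 0.0


# Toy labels (hypothetical): 95 negatives, 5 positives
y_true = [0] * 95 + [1] * 5
# A model that always predicts the majority class
y_pred = [0] * 100

acc = accuracy(y_true, y_pred)
rec = recall(y_true, y_pred)
```

This is why it is worth looking at a metric that is sensitive to the minority class, rather than accuracy alone, when comparing models on data like this.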