Force feature data type to be non numeric.

I have a dataset in which at least one field is a postcode. Most of the postcodes are 4-digit codes, such as 1234, but their value as a number has no meaning. At one point, I explicitly set the type to np.string in some of my Python code and got a type conflict error claiming the field was numeric.

I went through and systematically made sure I specified the type everywhere, including giving project.create() a pandas DataFrame instead of a CSV file. But DataRobot still insists that the field is numeric, and in the web interface I see that DataRobot lists the field as numeric and complains about outliers.
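A minimal pandas sketch of the client-side typing described above, assuming a hypothetical `postcode` column and made-up sample rows. A plain `read_csv` parses quoted codes as integers (dropping a leading zero), whereas `dtype={"postcode": str}` keeps them as text. This only controls the DataFrame's own dtype; as the replies below discuss, DataRobot still applies its own type detection to the uploaded values.

```python
import io

import pandas as pd

# Hypothetical CSV: quoted postcodes, one with a leading zero.
CSV_TEXT = 'postcode,sales\n"1234",10\n"0800",7\n'


def load_with_string_postcode(csv_text: str) -> pd.DataFrame:
    """Read the CSV, keeping the postcode column as strings."""
    return pd.read_csv(io.StringIO(csv_text), dtype={"postcode": str})


# Default inference ignores the quotes and parses the codes as integers.
default_df = pd.read_csv(io.StringIO(CSV_TEXT))
print(default_df["postcode"].tolist())  # [1234, 800]

# An explicit dtype keeps them as text, leading zero intact.
typed_df = load_with_string_postcode(CSV_TEXT)
print(typed_df["postcode"].tolist())  # ['1234', '0800']
```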

I changed the format to 'Nx1234' so that it could not be interpreted as a number, and the problem went away. But I cannot use this solution, as the postcode will be compared in other places outside this context, and I would certainly not be happy about having to convert the format back and forth to get around a foible of DataRobot.

I conclude that even though the information in the csv file is in quotes and even though I hand over a pandas dataframe with explicit string type, DataRobot still insists on looking inside the string and concluding it is a number.

How can I stop it doing this?

Hi @Bruce,

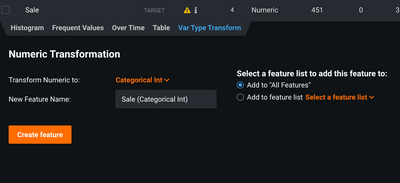

You can create a new feature as type "Categorical Int" which will treat this feature as categorical via the "Var Type Transform" tab, like so:

Or, if you are using the python library, like so: https://datarobot-public-api-client.readthedocs-hosted.com/en/v2.26.1/autodoc/api_reference.html#dat...
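A hedged sketch of the Python-client route. It assumes the client's `Project.create_type_transform_feature` method accepts `name`, `parent_name` and `variable_type`, and that the Categorical Int type is spelled "categoricalInt" (the value behind `datarobot.enums.VARIABLE_TYPE_TRANSFORM.CATEGORICAL_INT`); check both against the documentation for your client version. The helper name and the new feature name are hypothetical.

```python
from typing import Any

# Assumption: value of datarobot.enums.VARIABLE_TYPE_TRANSFORM.CATEGORICAL_INT.
CATEGORICAL_INT = "categoricalInt"


def add_categorical_copy(project: Any, parent_name: str, new_name: str) -> Any:
    """Ask DataRobot to derive a Categorical Int copy of a numeric feature.

    `project` is assumed to be a datarobot.Project, e.g. dr.Project.get(project_id).
    The original numeric feature stays in the project; the new one is added alongside.
    """
    return project.create_type_transform_feature(
        name=new_name,
        parent_name=parent_name,
        variable_type=CATEGORICAL_INT,
    )


# Example usage (needs a configured client and an existing project):
# import datarobot as dr
# project = dr.Project.get("YOUR_PROJECT_ID")
# add_categorical_copy(project, "postcode", "postcode_cat")
```

The new feature can then be put into a feature list in place of the numeric original.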

I hope that helps.

Cheers,

Lukas


Not an answer to the type question, but it may be beneficial to try converting your postcode to a geospatial data type rather than a string. Geospatial data lets DataRobot use Location AI, which provides Exploratory Spatial Data Analysis features and unique modelling options for your project.


hey @Bruce

DataRobot has inbuilt heuristics to automatically detect the appropriate data types; these also form part of the guardrails in DataRobot.

For Australia, I've found that using postcode as numeric can sometimes perform better, as it's a sparse column and, when converted to a category, it can lead to overfitting.

But to answer your question, there's a programmatic way to do it by calling the method "batch_features_type_transform" on the Project class. Basically, once the data is inside DataRobot and it has converted the postcode to numeric, you force-convert it to a category again. It's the same as what @Lukas posted for doing it via the GUI. The details can be found in the Python client documentation. I haven't tested this myself, so I'll try it over the next few days too.
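The batch route might look like this minimal sketch. It assumes `batch_features_type_transform` accepts `parent_names`, `variable_type` and `suffix` keyword arguments, and that (as I understand the client documentation) at least one of `prefix` or `suffix` must be given so the new features get distinct names. The "categoricalInt" value, the `_cat` suffix and the helper name are assumptions to check against your client version.

```python
from typing import Any, List, Sequence

# Assumption: value of datarobot.enums.VARIABLE_TYPE_TRANSFORM.CATEGORICAL_INT.
CATEGORICAL_INT = "categoricalInt"


def force_categorical(project: Any, parent_names: Sequence[str], suffix: str = "_cat") -> List[Any]:
    """Create categorical copies of several numeric features in one call.

    `project` is assumed to be a datarobot.Project. The suffix names the new
    features (e.g. "postcode" -> "postcode_cat"); "_cat" is an arbitrary choice.
    """
    if not suffix:
        raise ValueError("a non-empty suffix is needed to name the new features")
    return project.batch_features_type_transform(
        parent_names=list(parent_names),
        variable_type=CATEGORICAL_INT,
        suffix=suffix,
    )


# Example usage (needs a configured client and an existing project):
# import datarobot as dr
# project = dr.Project.get("YOUR_PROJECT_ID")
# force_categorical(project, ["postcode"])
```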

If you do get there first, @Bruce, do post the solution here, and thanks for coming to the community! =D

Thanks

Eu Jin


@IraWatt Thanks for the comment about other types for post codes, as a side issue. My response here is partly to some other comments as well.

As noted, the question was definitely about how to prevent a string with a number in it from becoming numeric in DataRobot. In particular, in this case it got converted to numeric and then flagged as having outliers, which indicates an incorrect understanding of its meaning. It then caused a data type error when predictions were attempted.

The idea that performance can sometimes be better if the postcode is a number shows a definite fault in itself. British postcodes are not numbers, for example. Would converting them to a random number help? I would actually hope not, as that would say there was something screwy in DR.

If it happens that typing Oz postcodes as numbers did help, it means that there is some real information (probably geographic) implied in the number. But DR should have been able to extract that from the string. So the whole thing becomes horribly contingent on the details of DR's implementation, and it just does not smell right to me as a data scientist.

In conclusion, I agree with the principle of converting it to geospatial data, if it is not just going to be a string. Thanks.


@Bruce I've linked the UK postcode (lat, lon) download website which should be alright to left join onto your data if you want to.
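A minimal pandas sketch of such a left join, assuming the downloaded lookup has `postcode`, `lat` and `lon` columns (hypothetical names; adjust to the actual file). The postcodes and coordinates below are placeholders, not real data. Normalising case and spaces before joining avoids misses caused by formatting differences, and `how="left"` keeps every original row, leaving NaN coordinates where there is no match.

```python
import pandas as pd


def _normalise(postcodes: pd.Series) -> pd.Series:
    """Upper-case and remove spaces so 'bb2 2bb' and 'BB2 2BB' match."""
    return postcodes.astype(str).str.upper().str.replace(" ", "", regex=False)


def add_coordinates(df: pd.DataFrame, lookup: pd.DataFrame) -> pd.DataFrame:
    """Left-join lat/lon from a postcode lookup table onto df."""
    left = df.assign(_key=_normalise(df["postcode"]))
    right = lookup.assign(_key=_normalise(lookup["postcode"]))[["_key", "lat", "lon"]]
    # how="left" keeps every row of df; validate guards against duplicate lookup keys.
    merged = left.merge(right, on="_key", how="left", validate="many_to_one")
    return merged.drop(columns="_key")


# Placeholder data for illustration only (not real postcodes or coordinates).
customers = pd.DataFrame({"postcode": ["AA1 1AA", "bb2 2bb", "ZZ9 9ZZ"], "sales": [10, 7, 3]})
lookup = pd.DataFrame({"postcode": ["AA1 1AA", "BB2 2BB"], "lat": [51.0, 52.0], "lon": [-1.0, -2.0]})
print(add_coordinates(customers, lookup))
```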

On your points, I agree with the premise that DR should be able to extract signal from a string- or number-typed postcode. However, I don't think it's possible to know in advance which type will produce the most signal, as there is probably a numeric relationship between postcodes, if not a perfect interval one. If there is a numeric relationship, DR would capitalise on it, allowing the numeric type to outperform the string type even with having to deal with outliers. It's definitely worth using both and comparing the two types' feature importance and Feature Impact (permutation-based), then creating a feature list with the best type. My guess is Geo would win against both XD.

It's a good point, though, that you can't easily predefine data types until after EDA. It may save DR some overhead if you could force the type beforehand.


This seems to be a feature engineering task that is more unique to Australia as opposed to generically for the whole world.

Your additional fix to the feature augments the data and that helps to add that piece of domain knowledge to your toolkit for analysis, and now for us as well. Thanks.


Yes, the trick can be to add a non-numeric character before or after the number, and DR will interpret it as categorical (or text if the cardinality is very high).
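A small sketch of this workaround as a reversible pair of pandas helpers, which keeps the back-and-forth conversion Bruce was worried about to one call each way. The `PC_` prefix and the function names are hypothetical; the input should already be strings (for example read with `dtype=str`) so that leading zeros survive.

```python
import pandas as pd

PREFIX = "PC_"  # hypothetical marker; any non-digit prefix would do


def tag_postcodes(codes: pd.Series) -> pd.Series:
    """Prefix each code so automatic type detection cannot parse it as a number."""
    return PREFIX + codes.astype(str)


def untag_postcodes(tagged: pd.Series) -> pd.Series:
    """Strip the prefix again, e.g. after downloading predictions."""
    if not tagged.str.startswith(PREFIX).all():
        raise ValueError(f"some values do not start with {PREFIX!r}")
    return tagged.str.slice(start=len(PREFIX))


codes = pd.Series(["1234", "0800"])
tagged = tag_postcodes(codes)
print(tagged.tolist())                   # ['PC_1234', 'PC_0800']
print(untag_postcodes(tagged).tolist())  # ['1234', '0800']
```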

You can also transform within DR (see Lukas's answer above).

For complex tree-based methods, even if it is read in as numeric, it may well be treated de facto as categorical, in the sense that very fine-grained splits of a numeric variable could individuate, or come very close to individuating, specific values. Again, this would work if the cardinality is not too high; the example given (postcodes) would likely be OK on a bigger dataset.
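To illustrate the point about fine-grained splits: with a numeric postcode, a tree needs at most two threshold splits to single out one code. The sketch below computes those two thresholds using midpoints between neighbouring values, a common choice in CART-style implementations; the function name and the sample codes are hypothetical.

```python
from bisect import bisect_left
from typing import Iterable, Tuple


def isolating_thresholds(codes: Iterable[float], target: float) -> Tuple[float, float]:
    """Return (low, high) so that low < x <= high holds for target and no other code."""
    values = sorted(set(codes))
    i = bisect_left(values, target)
    if i == len(values) or values[i] != target:
        raise ValueError(f"{target} is not among the codes")
    # Split 1: "x > low" discards every smaller code (not needed for the minimum).
    low = (values[i - 1] + target) / 2 if i > 0 else float("-inf")
    # Split 2: "x <= high" discards every larger code (not needed for the maximum).
    high = (target + values[i + 1]) / 2 if i + 1 < len(values) else float("inf")
    return low, high


codes = [2000, 2010, 2600, 3000, 4000]
low, high = isolating_thresholds(codes, 2600)
print(low, high)                              # 2305.0 2800.0
print([c for c in codes if low < c <= high])  # [2600]
```

With many distinct codes and few rows per code, a tree would rarely have enough data to justify all these splits, which is why cardinality matters here.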

Finally, there may be information inherent in the field itself: for example, US ZIP codes starting with '9' are all in the West Coast and Pacific states, and Australian postcodes starting with '2' are, apart from those in the ACT, all in NSW. So while treating them as categorical may be better philosophically, treating them as numeric may also capture information that would otherwise not be gleaned. You could try working with both.
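One way to "work with both" is to derive several views of the same column and compare them with feature impact, as suggested earlier in the thread. A minimal pandas sketch follows; the column names and the `PC_`/`D` prefixes are hypothetical, and the prefixes are only there so automatic type detection keeps the categorical views non-numeric.

```python
import pandas as pd


def add_postcode_views(df: pd.DataFrame, col: str = "postcode") -> pd.DataFrame:
    """Add numeric, categorical and leading-digit versions of a postcode column.

    Pass postcodes as strings (e.g. read with dtype=str) so leading zeros survive.
    """
    codes = df[col].astype(str).str.strip()
    out = df.copy()
    # Numeric view: lets tree models exploit ordering; non-digit codes become NaN.
    out[f"{col}_num"] = pd.to_numeric(codes, errors="coerce")
    # Categorical view: prefixed so it cannot be parsed as a number.
    out[f"{col}_cat"] = "PC_" + codes
    # Coarse region proxy: the leading digit, also prefixed to stay categorical.
    out[f"{col}_first_digit"] = "D" + codes.str[0]
    return out


sample = pd.DataFrame({"postcode": ["2000", "0800", "3000"]})
print(add_postcode_views(sample))
```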