Different Prediction Thresholds?

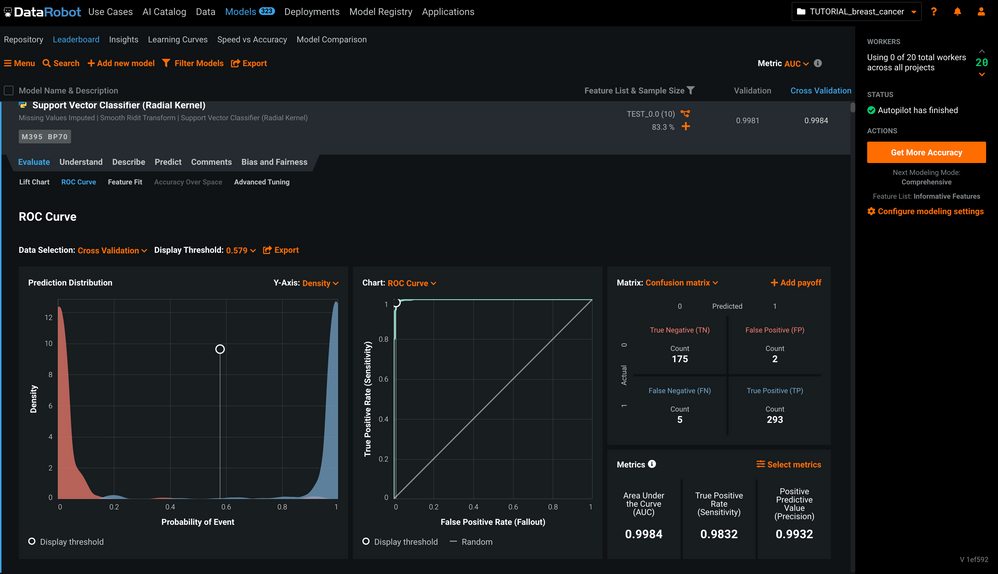

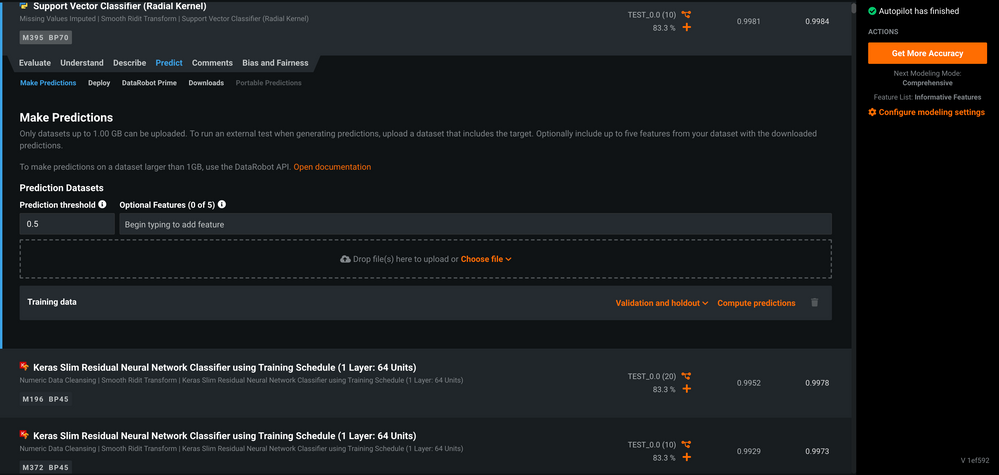

Why does the default Display Threshold under the Evaluate > ROC Curve tab differ from the Prediction Threshold under the Predict > Make Predictions tab?

Some screenshots below to illustrate what I'm seeing.

Evaluate > ROC Curve tab showing display threshold for model AUC is 0.579

Predict > Make Predictions tab showing prediction threshold of 0.5 for the same model

Hi @bioinformed ,

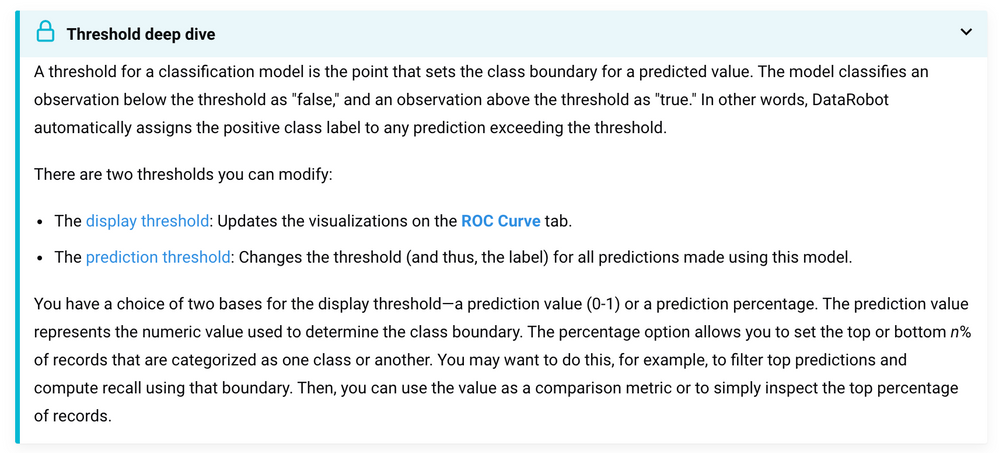

The display threshold is one you can change while you are in the validation stage of modelling. Even if you experiment with different thresholds there, they don't affect predictions.

That is what the prediction threshold is for: you set it, and it is used only when making predictions, not in your analysis.
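To make the distinction concrete, here is a minimal sketch in plain Python (not the DataRobot API). It assumes a threshold is simply a cutoff applied to a predicted probability, with rows at or above the cutoff labelled positive. The probabilities and labels are made up for illustration; only the two threshold values, 0.579 and 0.5, come from the question.

```python
# Hypothetical predicted probabilities and true labels (illustrative only).
probs = [0.10, 0.35, 0.52, 0.55, 0.60, 0.80]
labels = [0, 0, 0, 1, 1, 1]


def classify(probabilities, threshold):
    """Label a row positive (1) when its probability is >= threshold.

    The >= convention is an assumption made for this sketch.
    """
    return [1 if p >= threshold else 0 for p in probabilities]


def confusion_counts(predicted, actual):
    """Return (tp, fp, fn, tn) for binary 0/1 labels."""
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p == 1 and a == 1)
    fp = sum(1 for p, a in pairs if p == 1 and a == 0)
    fn = sum(1 for p, a in pairs if p == 0 and a == 1)
    tn = sum(1 for p, a in pairs if p == 0 and a == 0)
    return tp, fp, fn, tn


display_threshold = 0.579    # value shown on the ROC Curve tab in the question
prediction_threshold = 0.5   # value shown on the Make Predictions tab

# Same probabilities, two different cutoffs: one for analysis, one for output.
display_labels = classify(probs, display_threshold)
prediction_labels = classify(probs, prediction_threshold)

print("display   :", display_labels, confusion_counts(display_labels, labels))
print("prediction:", prediction_labels, confusion_counts(prediction_labels, labels))
```

The model's probabilities are identical in both cases. Only the cutoff differs, so changing one threshold leaves the labels produced with the other untouched.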

I hope that makes sense.

Cheers,

Lukas

Hi @bioinformed ,

Following up on @Lukas's response: if you would like more information about the differences between the "display threshold" and the "prediction threshold", take a look at the following section in the Platform Documentation:

https://docs.datarobot.com/en/docs/modeling/analyze-models/evaluate/roc-curve-tab/threshold.html#set...

More specifically, click the dropdown named Threshold deep dive, screenshot below:

Hope this helps!

Sincerely,

Alex Shoop

Hi @bioinformed ,

To elaborate on Lukas's and Shoop's points: the thresholds are simply set to defaults on the different screens, much as a manual-transmission vehicle sits in neutral until you shift.

As they've mentioned, DataRobot gives you the control and flexibility to change the display and prediction thresholds at your discretion, depending on your needs.
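As an illustration of picking a threshold to suit a particular need, here is a rough sketch in plain Python (again, not the DataRobot API). It sweeps candidate cutoffs and keeps the one with the highest F1 score. The data, the `f1_at` helper, and the choice of F1 as the metric are all assumptions for the example; this thread does not say how the defaults on either screen are chosen.

```python
# Hypothetical predicted probabilities and true labels (illustrative only).
probs = [0.10, 0.35, 0.52, 0.55, 0.60, 0.80]
labels = [0, 0, 0, 1, 1, 1]


def f1_at(probabilities, actual, threshold):
    """F1 score when rows with probability >= threshold are labelled positive."""
    predicted = [1 if p >= threshold else 0 for p in probabilities]
    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p == 1 and a == 1)
    fp = sum(1 for p, a in pairs if p == 1 and a == 0)
    fn = sum(1 for p, a in pairs if p == 0 and a == 1)
    denom = 2 * tp + fp + fn
    return 2 * tp / denom if denom else 0.0


# Use each distinct predicted probability as a candidate cutoff.
candidates = sorted(set(probs))
best_threshold = max(candidates, key=lambda t: f1_at(probs, labels, t))

print("best threshold by F1:", best_threshold)
```

A different goal, such as limiting false positives, would call for a different metric in place of F1 and could land on a different threshold.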

You may wish to attend a Virtual Instructor-Led class such as Auto-ML I or DataRobot for Data Scientists, which cover this topic and more:

https://university.datarobot.com/automl-i

https://university.datarobot.com/datarobot-for-data-scientists